Three months of production experience with Claude + MCP teaches you things that no documentation covers. The retry patterns that actually work. The system prompts that reduce hallucinated tool calls. The caching strategies that cut your bill in half. The error classes you will encounter and the ones that silently corrupt output. This lesson consolidates those hard-won patterns into a reference you can apply directly.

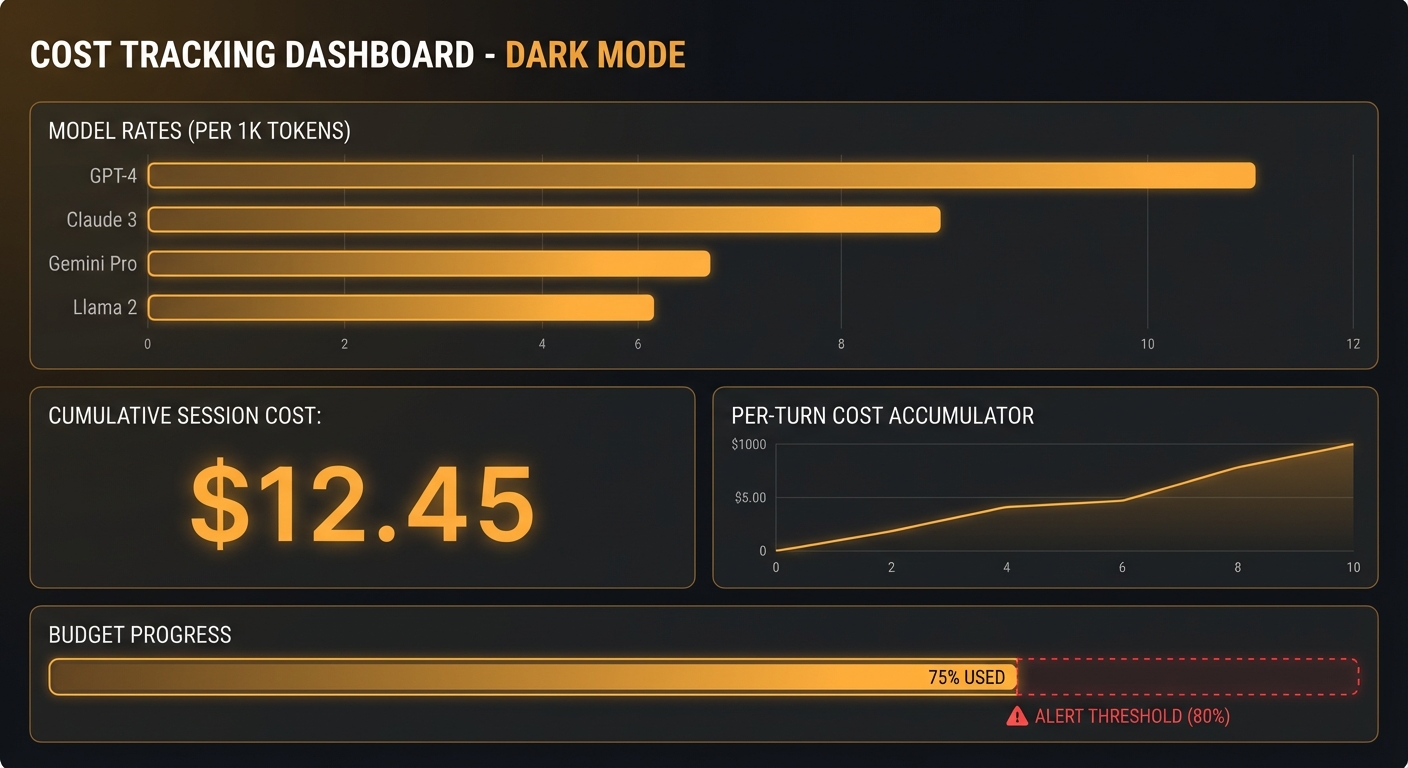

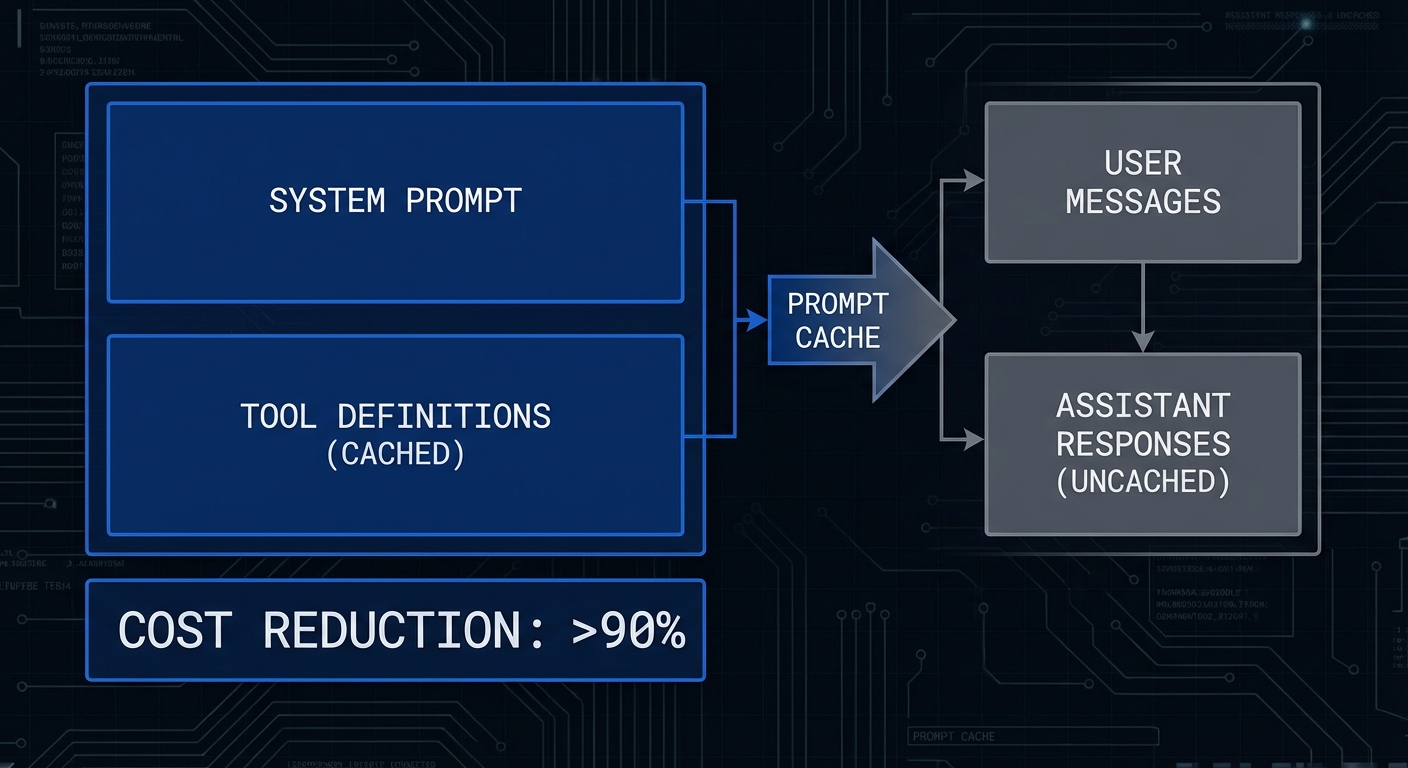

Prompt Caching for Cost Reduction

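Anthropic’s prompt caching feature caches the portions of the input prompt that do not change between requests. For MCP applications, the tool definitions and system prompt are ideal candidates: they are typically identical for every request in a session. Cache reads are billed at roughly 10% of the normal input-token price, while cache writes cost about 25% more than uncached input, so the saving on the cached prefix approaches 90% once a session is warm. Depending on how much of each request is cacheable prefix, that works out to a 50-90% reduction in input cost on repeated calls. Prompts shorter than the minimum cacheable length (1,024 tokens for Claude 3.5 Sonnet) are not cached at all.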

// Enable prompt caching for stable content
const response = await anthropic.messages.create({
  model: 'claude-3-5-sonnet-20241022',
  max_tokens: 4096,
  system: [
    {
      type: 'text',
      text: `You are a helpful assistant with access to our product database and order management system.
Always verify product availability before confirming orders.
Format all prices in USD.`,
      cache_control: { type: 'ephemeral' }, // Cache this system prompt
    },
  ],
  // Cache tool definitions - they rarely change
  tools: claudeTools.map((t, i) => ({
    ...t,
    ...(i === claudeTools.length - 1
      ? { cache_control: { type: 'ephemeral' } } // Cache after last tool definition
      : {}),
  })),
  messages,
});

// Check cache performance in usage stats
const usage = response.usage;
console.error(`Cache: ${usage.cache_read_input_tokens} hit, ${usage.cache_creation_input_tokens} created`);

In practice, the 5-minute rolling cache window (refreshed each time the cached prefix is read) means that prompt caching works best for active sessions with frequent back-and-forth. For infrequent requests, or batch jobs whose calls are spaced more than five minutes apart, the cache expires between calls and you will not see meaningful savings.

“Prompt caching enables you to cache portions of your prompt. Cached data is stored server-side for a rolling 5-minute period, after which it expires. Cache hits save 90% of input token costs for the cached portion.” – Anthropic Documentation, Prompt Caching
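To make those numbers concrete, here is a minimal sketch that turns a response's usage object into a cache hit rate and a relative input cost. The helper name summarizeCacheUsage is hypothetical, and the 0.1x read and 1.25x write multipliers are assumptions based on Anthropic's published pricing for the 5-minute cache; check current pricing before relying on them.

```javascript
// Hypothetical helper: derive cache metrics from the `usage` object of a Messages API response.
// Assumed multipliers: cache reads cost 0.1x the base input price, 5-minute cache writes 1.25x.
const CACHE_READ_MULTIPLIER = 0.1;
const CACHE_WRITE_MULTIPLIER = 1.25;

function summarizeCacheUsage(usage) {
  const uncached = usage.input_tokens ?? 0;
  const read = usage.cache_read_input_tokens ?? 0;
  const written = usage.cache_creation_input_tokens ?? 0;
  const total = uncached + read + written;
  if (total === 0) return { totalInputTokens: 0, hitRate: 0, relativeCost: 1 };

  // Input cost expressed in "uncached token" units
  const billedUnits =
    uncached + read * CACHE_READ_MULTIPLIER + written * CACHE_WRITE_MULTIPLIER;

  return {
    totalInputTokens: total,
    hitRate: read / total,              // share of input tokens served from cache
    relativeCost: billedUnits / total,  // 1.0 means "same as no caching"
  };
}

// Example with made-up numbers: a warm request where most of the prompt is read from cache
const warm = summarizeCacheUsage({
  input_tokens: 200,
  cache_read_input_tokens: 1800,
  cache_creation_input_tokens: 0,
});
console.log(warm.hitRate, warm.relativeCost);
```

A relativeCost above 1.0 on most requests suggests the cache is being written but rarely read, which usually means the "static" prefix is changing between calls.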

System Prompt Patterns That Work

Claude responds better to system prompts that describe the persona, define tool usage rules, specify output format, and set boundaries – in that order. Vague system prompts produce vague tool use.

const PRODUCTION_SYSTEM_PROMPT = `You are a precise product research assistant for TechStore.

TOOL USAGE RULES:
1. Always call search_products before making any recommendations
2. For price comparisons, call get_product_price for each product separately
3. If a product has fewer than 3 reviews, note "limited reviews" in your response
4. Never recommend products that are out of stock (use check_availability first)
5. If tools return errors, explain what you could not verify rather than guessing

OUTPUT FORMAT:
- Lead with the recommendation, then supporting evidence
- Include price, rating, and availability for each recommended product
- Use bullet points for product comparisons
- End with "Note: Stock and prices verified at [current timestamp]"

BOUNDARIES:
- You can only recommend products from our catalogue
- Do not speculate about products not in the search results
- If the user asks for something outside our catalogue, say so clearly`;

A well-structured system prompt is the single highest-leverage improvement you can make to a Claude + MCP integration. Vague prompts like “be helpful and use tools when needed” produce erratic tool usage. Specific rules about when to call which tool, in what order, and how to handle errors reduce hallucinated tool calls dramatically.
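If you maintain several prompts, it can help to generate them from structured data so that the section order stays consistent. The sketch below is one way to do that; buildSystemPrompt and its option names are hypothetical, not part of any SDK.

```javascript
// Hypothetical helper: assemble a system prompt in the order
// persona -> tool usage rules -> output format -> boundaries.
function buildSystemPrompt({ persona, toolRules = [], outputFormat = [], boundaries = [] }) {
  if (!persona) throw new Error('persona is required');

  const numbered = toolRules.map((rule, i) => `${i + 1}. ${rule}`);
  const bulleted = items => items.map(item => `- ${item}`);

  const sections = [
    ['TOOL USAGE RULES:', ...numbered],
    ['OUTPUT FORMAT:', ...bulleted(outputFormat)],
    ['BOUNDARIES:', ...bulleted(boundaries)],
  ].filter(lines => lines.length > 1); // skip headings that have no entries

  // Persona first, then each non-empty section, separated by blank lines
  return [persona, ...sections.map(lines => lines.join('\n'))].join('\n\n');
}

const prompt = buildSystemPrompt({
  persona: 'You are a precise product research assistant for TechStore.',
  toolRules: [
    'Always call search_products before making any recommendations',
    'Never recommend products that are out of stock (use check_availability first)',
  ],
  outputFormat: ['Lead with the recommendation, then supporting evidence'],
  boundaries: ['You can only recommend products from our catalogue'],
});
console.log(prompt);
```

Keeping the rules as arrays also makes it easy to diff prompt changes in code review and to test that a rule was not dropped by accident.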

Production Error Taxonomy

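// Claude API errors and how to handle them
// 429 - Rate limit: retry with exponential backoff
// 529 - Overloaded: retry with longer backoff (Anthropic load)
// 400 - Bad request: check tool schema, messages format, max_tokens
// 401 - Auth error: check ANTHROPIC_API_KEY
// 413 - Request too large: trim context or summarize conversation history

// Non-error patterns to watch:
// stop_reason === 'max_tokens' - response was cut off, increase max_tokens
// stop_reason === 'end_turn' but no text - model may be stuck, check context

// Note: the SDK client also retries some errors on its own (its maxRetries option),
// so total attempts can exceed maxRetries here unless that option is set to 0.
async function callClaudeWithRetry(params, maxRetries = 3) {
  for (let attempt = 1; attempt <= maxRetries; attempt++) {
    try {
      return await anthropic.messages.create(params);
    } catch (err) {
      // 429 and every 5xx (including 529) are transient; other 4xx need a code fix
      const shouldRetry = err.status === 429 || err.status >= 500;
      if (!shouldRetry || attempt === maxRetries) throw err;

      // Exponential backoff: 2s, 4s, 8s, ... capped at 30s
      const backoffMs = Math.min(1000 * 2 ** attempt, 30000);

      // Prefer the server's retry-after value (in seconds) when it is present.
      // Depending on SDK version, err.headers is a plain object or a Headers instance.
      const headers = err.headers;
      const rawRetryAfter = typeof headers?.get === 'function'
        ? headers.get('retry-after')
        : headers?.['retry-after'];
      const retryAfterSec = Number.parseInt(rawRetryAfter, 10);
      const delayMs = Number.isFinite(retryAfterSec) ? retryAfterSec * 1000 : backoffMs;

      console.error(`[claude] Attempt ${attempt} failed (${err.status}), retrying in ${delayMs}ms`);
      await new Promise(r => setTimeout(r, delayMs));
    }
  }
}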

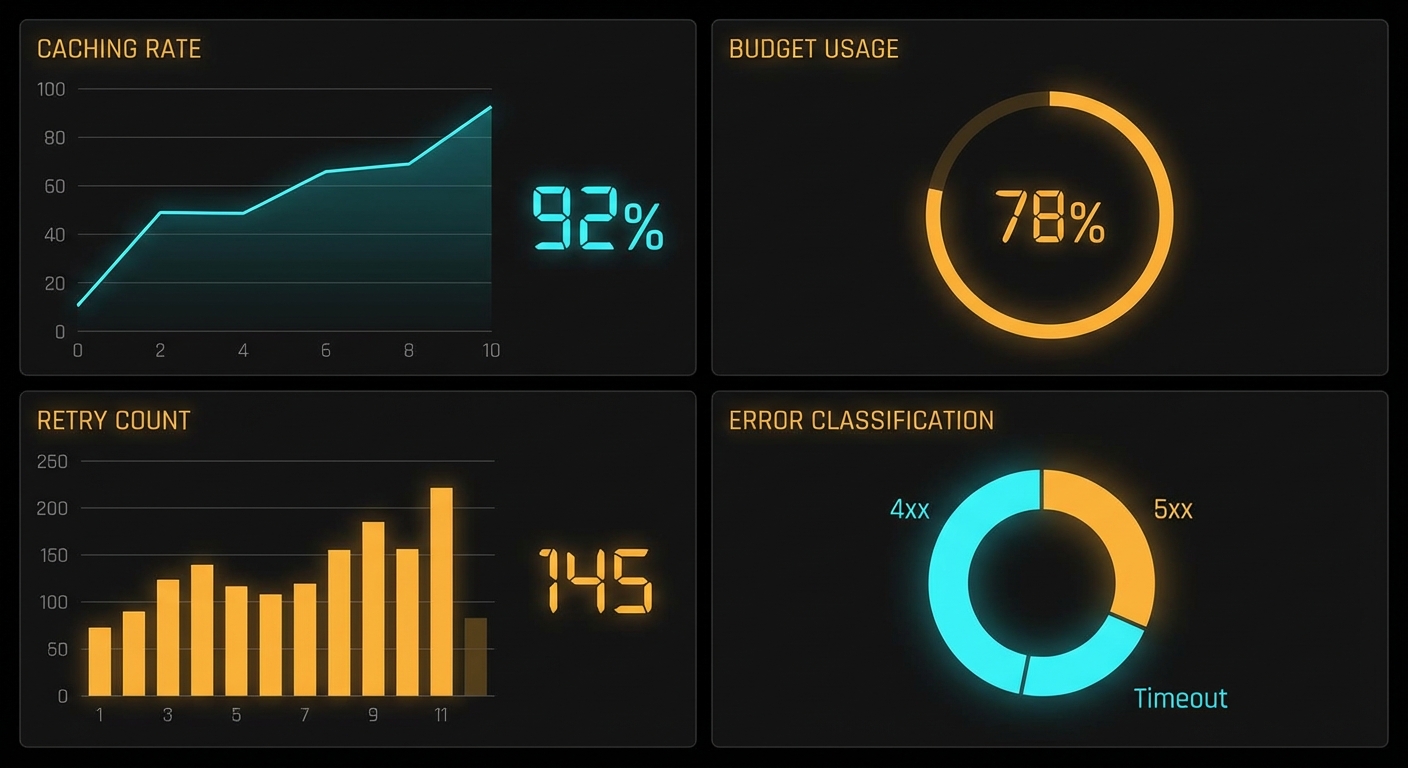

Rate limiting (429) is the error you will hit most often during development and load testing. Anthropic’s rate limits are per-organization, so one runaway script can block your entire team. Always implement retry logic before you start scaling, not after.
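Because the limits are shared, several workers that hit a 429 at the same moment will also retry at the same moment unless their delays are randomised. The sketch below pairs a status classifier that mirrors the taxonomy above with a "full jitter" backoff. Both function names are hypothetical, and the action strings are only suggestions.

```javascript
// Hypothetical mapping from HTTP status to a handling decision.
function classifyApiError(status) {
  switch (status) {
    case 429:
      return { retry: true, action: 'back off exponentially; honour retry-after if present' };
    case 529:
      return { retry: true, action: 'back off for longer; the API is overloaded' };
    case 400:
      return { retry: false, action: 'fix the request: tool schema, messages format, max_tokens' };
    case 401:
      return { retry: false, action: 'check ANTHROPIC_API_KEY' };
    case 413:
      return { retry: false, action: 'trim context or summarise history, then resend' };
    default:
      // An undefined status (for example a network failure) falls through to "do not retry" here
      return status >= 500
        ? { retry: true, action: 'transient server error; retry with backoff' }
        : { retry: false, action: 'unexpected error; log and surface it' };
  }
}

// Exponential backoff with full jitter: a random delay in [0, min(cap, base * 2^attempt)).
// `random` is injectable so the function can be tested deterministically.
function backoffWithJitter(attempt, baseMs = 1000, capMs = 30000, random = Math.random) {
  const ceilingMs = Math.min(capMs, baseMs * 2 ** attempt);
  return Math.floor(random() * ceilingMs);
}

const decision = classifyApiError(429);
if (decision.retry) {
  console.log(`retrying in ${backoffWithJitter(1)}ms: ${decision.action}`);
}
```

Full jitter trades a slightly longer average wait for a much flatter retry curve, which matters most when many processes share one organization-level limit.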

Context Management for Long Conversations

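// Summarise old conversation history when approaching context limits
// Claude 3.5 Sonnet context: 200K tokens (allows very long conversations)
// But cost grows linearly with context - summarize for efficiency
async function summariseHistory(messages, anthropicClient, tools = []) {
  const summaryRequest = await anthropicClient.messages.create({
    model: 'claude-3-5-haiku-20241022', // Use cheaper model for summarisation
    max_tokens: 500,
    // History containing tool_use / tool_result blocks may be rejected unless the
    // tool definitions are sent too, so pass them through when available
    ...(tools.length > 0 ? { tools } : {}),
    messages: [
      ...messages,
      { role: 'user', content: 'Summarise our conversation so far in 3 bullet points, preserving all key facts found via tool calls.' },
    ],
  });
  // Join the text blocks instead of assuming the first content block is text
  return summaryRequest.content
    .filter(block => block.type === 'text')
    .map(block => block.text)
    .join('\n');
}

// In your main conversation loop, check token usage after a completed turn
// (never between a tool_use and its tool_result, or the history becomes invalid).
// With prompt caching enabled, input_tokens excludes cached tokens, so add them back.
const { input_tokens, cache_read_input_tokens, cache_creation_input_tokens } = response.usage;
const totalInputTokens =
  input_tokens + (cache_read_input_tokens ?? 0) + (cache_creation_input_tokens ?? 0);
if (totalInputTokens > 50000 && response.stop_reason === 'end_turn') {
  const summary = await summariseHistory(messages, anthropic, claudeTools);
  messages = [{ role: 'user', content: `Previous conversation summary:\n${summary}` }];
}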

Context summarization is a cost optimization, not just a technical constraint. Even if your conversation fits within Claude’s 200K context window, sending 100K tokens per request is expensive. Summarizing early keeps your per-request cost predictable and your latency consistent.
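Replacing the whole history with a summary discards the most recent turns, which are often the ones the model needs verbatim. A gentler variant keeps the last few messages and folds the summary into the first one that is kept. The sketch below is one possible implementation; compactHistory and keepLast are hypothetical names, and it assumes messages follow the Messages API shape (role plus string or block-array content).

```javascript
// True when a message carries tool_result blocks, i.e. it answers a tool_use
// in the preceding assistant turn and must not be separated from it.
function hasToolResult(message) {
  return Array.isArray(message.content) &&
    message.content.some(block => block.type === 'tool_result');
}

// Keep roughly the last `keepLast` messages and prepend the summary to the first kept one.
function compactHistory(messages, summary, keepLast = 4) {
  // Walk the cut point backwards until the kept tail starts with a plain user message,
  // so no tool_result is left without its matching tool_use.
  let cut = Math.max(0, messages.length - Math.max(1, keepLast));
  while (cut > 0 && (messages[cut].role !== 'user' || hasToolResult(messages[cut]))) {
    cut -= 1;
  }
  if (cut === 0) return messages; // nothing can be dropped safely

  const [first, ...rest] = messages.slice(cut);
  const firstBlocks = typeof first.content === 'string'
    ? [{ type: 'text', text: first.content }]
    : first.content;
  const summaryBlock = { type: 'text', text: `Previous conversation summary:\n${summary}` };

  // Merge into one user message so roles keep alternating
  return [{ role: 'user', content: [summaryBlock, ...firstBlocks] }, ...rest];
}

const history = [
  { role: 'user', content: 'Find me a laptop under $1000' },
  { role: 'assistant', content: 'Here are three options.' },
  { role: 'user', content: 'Is the second one in stock?' },
  { role: 'assistant', content: [{ type: 'tool_use', id: 'tu_1', name: 'check_availability', input: {} }] },
  { role: 'user', content: [{ type: 'tool_result', tool_use_id: 'tu_1', content: 'in stock' }] },
  { role: 'assistant', content: 'Yes, it is in stock.' },
];
console.log(compactHistory(history, '- User wants a laptop under $1000', 2).length);
```

The backwards walk means the function may keep more than keepLast messages; that is deliberate, since a slightly longer tail is cheaper than a rejected request.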

Failure Mode: Model Outputting Tool Calls That Do Not Exist

// Claude occasionally hallucinates tool names, especially if tool descriptions are vague
// Guard against this at the execution layer
const toolNames = new Set(mcpTools.map(t => t.name));

for (const toolUse of toolUseBlocks) {
  if (!toolNames.has(toolUse.name)) {
    console.error(`[warn] Claude called non-existent tool: ${toolUse.name}`);
    toolResults.push({
      type: 'tool_result',
      tool_use_id: toolUse.id,
      content: [{ type: 'text', text: `Tool '${toolUse.name}' does not exist. Available tools: ${[...toolNames].join(', ')}` }],
      is_error: true,
    });
    continue;
  }
  // ... execute valid tool
}

Hallucinated tool names are surprisingly common when your server exposes many tools with similar names, or when tool descriptions are ambiguous. The validation pattern above is cheap insurance: a few lines of code that prevent cascading failures from a single bad tool call.
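The same guard can be pulled out into a pure function, which makes it easy to unit-test without a running MCP server. This is a sketch under the same assumptions as the loop above; splitToolCalls is a hypothetical name.

```javascript
// Hypothetical helper: separate tool_use blocks into ones that can be executed
// and ready-made error results for names the server does not expose.
function splitToolCalls(toolUseBlocks, availableToolNames) {
  const known = new Set(availableToolNames);
  const valid = [];
  const errorResults = [];

  for (const toolUse of toolUseBlocks) {
    if (known.has(toolUse.name)) {
      valid.push(toolUse);
      continue;
    }
    // Returning the error to the model (rather than throwing) lets it pick a real tool next turn
    errorResults.push({
      type: 'tool_result',
      tool_use_id: toolUse.id,
      content: [{
        type: 'text',
        text: `Tool '${toolUse.name}' does not exist. Available tools: ${[...known].join(', ')}`,
      }],
      is_error: true,
    });
  }
  return { valid, errorResults };
}

const { valid, errorResults } = splitToolCalls(
  [
    { type: 'tool_use', id: 'tu_1', name: 'search_products', input: { q: 'laptop' } },
    { type: 'tool_use', id: 'tu_2', name: 'search_product', input: { q: 'laptop' } },
  ],
  ['search_products', 'get_product_price', 'check_availability'],
);
console.log(valid.length, errorResults.length);
```

Every tool_use block still gets exactly one tool_result in the next user message: the results of executing the valid calls plus the entries in errorResults.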

What to Check Right Now

- Enable prompt caching – add cache_control: { type: 'ephemeral' } to your system prompt and the last tool definition. Check usage.cache_read_input_tokens to measure savings.

- Add a tool existence check – validate every tool name Claude returns before attempting to execute it via MCP. Hallucinated tool calls happen in production.

- Monitor stop reasons – log every stop_reason. A high rate of max_tokens stops means you need to increase max_tokens or summarize context sooner.

- Measure prompt cache hit rates – aim for >70% cache hit rate in sustained conversations. Low hit rates mean your “static” content is actually varying between calls.
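For the stop-reason check, a small in-memory counter is often enough to get started. The sketch below is hypothetical (createStopReasonTracker is not part of any SDK) and assumes each response object exposes stop_reason as the Messages API does; in a real deployment you would likely forward these counts to your metrics system instead.

```javascript
// Hypothetical in-memory tracker for stop reasons across responses.
function createStopReasonTracker() {
  const counts = new Map();
  let total = 0;

  return {
    // Call once per API response
    record(response) {
      const reason = response.stop_reason ?? 'unknown';
      counts.set(reason, (counts.get(reason) ?? 0) + 1);
      total += 1;
    },
    // Fraction of recorded responses that ended with the given reason
    rate(reason) {
      return total === 0 ? 0 : (counts.get(reason) ?? 0) / total;
    },
    // Plain-object view for logging
    snapshot() {
      return Object.fromEntries(counts);
    },
  };
}

const tracker = createStopReasonTracker();
tracker.record({ stop_reason: 'end_turn' });
tracker.record({ stop_reason: 'tool_use' });
tracker.record({ stop_reason: 'max_tokens' });
console.error('[claude] stop reasons:', tracker.snapshot(), 'max_tokens rate:', tracker.rate('max_tokens'));
```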

nJoy 😉