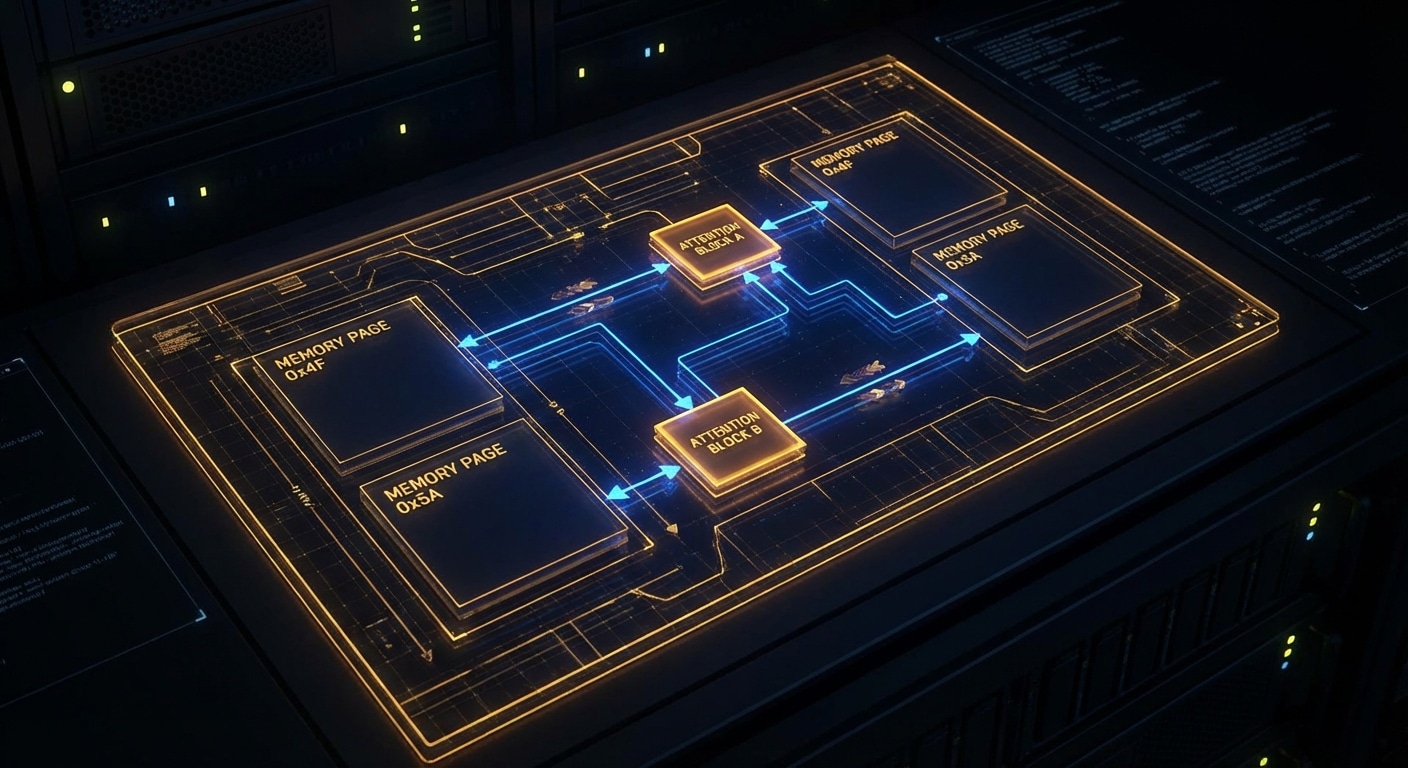

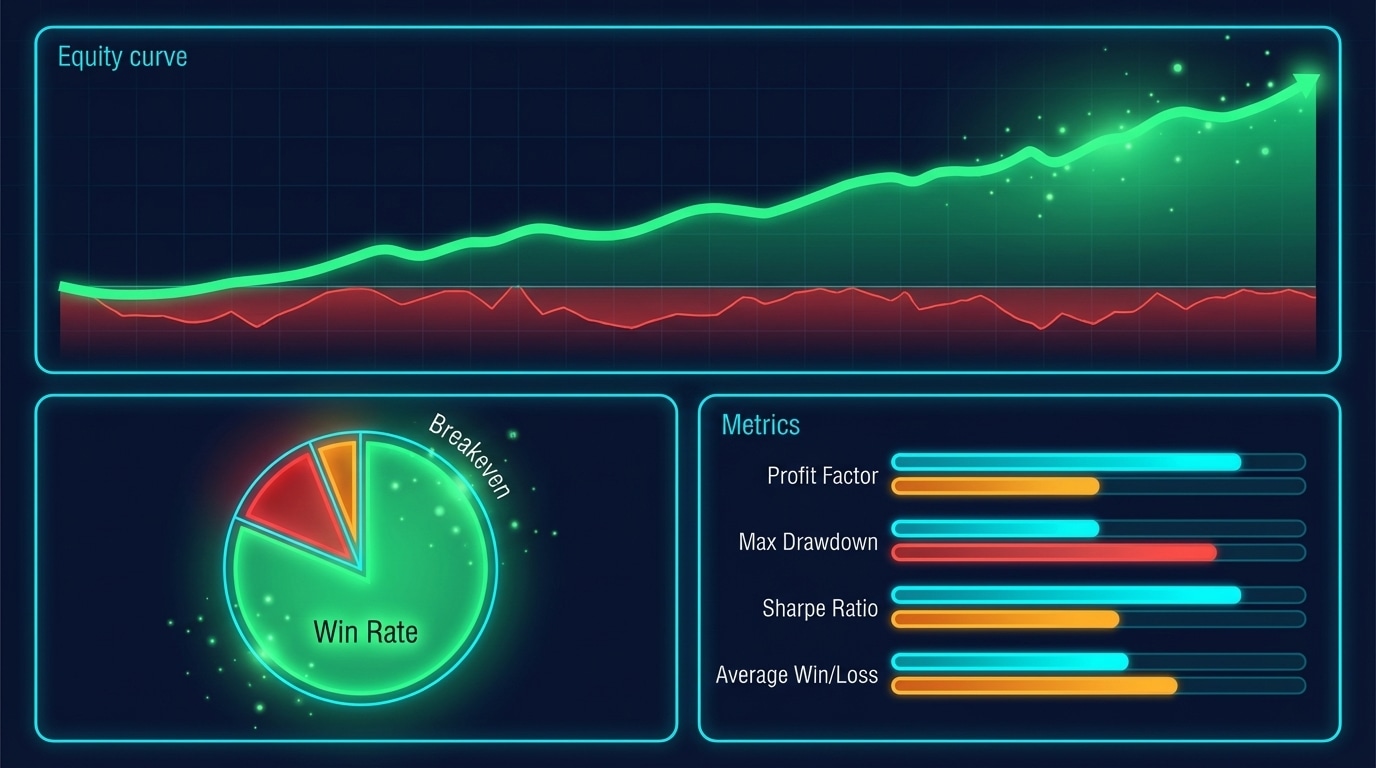

There are four main ways to run LLM inference today, each aimed at a different use case. vLLM is the performance king for multi-user APIs: it combines PagedAttention, continuous batching, and an OpenAI-compatible server. You run it on a GPU server, point clients at it, and scale by adding replicas. Hugging Face Text Generation Inference (TGI) is in the same league: it also offers continuous batching and an HTTP API, with strong support for Hugging Face models and built-in tooling. Choose vLLM when you want maximum throughput and flexibility; choose TGI when you’re already in the HF ecosystem and want a one-command deploy.
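To make "point clients at it" concrete, here is a minimal sketch of a client for an OpenAI-compatible chat endpoint such as the one vLLM exposes. It uses only the Python standard library. The host, port, and model name are assumptions for illustration, not values from any particular deployment; substitute whatever your server actually reports.

```python
import json
import urllib.request

# Assumptions: an OpenAI-compatible server (e.g. vLLM) is reachable at this
# address and serves a model under this name. Both values are placeholders.
VLLM_BASE_URL = "http://localhost:8000/v1"
VLLM_MODEL_NAME = "my-model"  # hypothetical model name


def build_chat_request(prompt: str, max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) a POST to the chat completions endpoint."""
    payload = {
        "model": VLLM_MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{VLLM_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat(prompt: str) -> str:
    """Send the request and return the first choice's text (needs a live server)."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `send_chat("Say hello in five words.")` would return the generated text. Because the request shape follows the OpenAI chat format, the same client should work against any replica behind a load balancer by changing only the base URL.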

Ollama is the “just run it” option on a Mac or PC. You install one binary, run `ollama run llama3`, and get a local chat and an API. It handles model download, quantized weights, and a simple server. There is no batching to speak of; it is essentially one request at a time, but for dev and personal use that’s fine. llama.cpp is the library underneath many local runners: C++, CPU and GPU, minimal dependencies, and the reference for quantization (GGUF, Q4_K_M, etc.). You use llama.cpp when you’re embedding inference in an app or need maximum control and portability.
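As a rough sketch of what the local API looks like, the snippet below builds a non-streaming request for Ollama's `/api/generate` endpoint using only the standard library. It assumes Ollama is running on its default local port (11434) and that the `llama3` model mentioned above has already been pulled; the helper names are made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url=OLLAMA_GENERATE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the request and return the generated text (needs Ollama running)."""
    with urllib.request.urlopen(build_generate_request(prompt, model), timeout=120) as resp:
        body = json.load(resp)
    return body["response"]
```

Calling `generate("Why is the sky blue?")` on a machine with Ollama running should return the full completion as one string, since `stream` is set to `False`.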

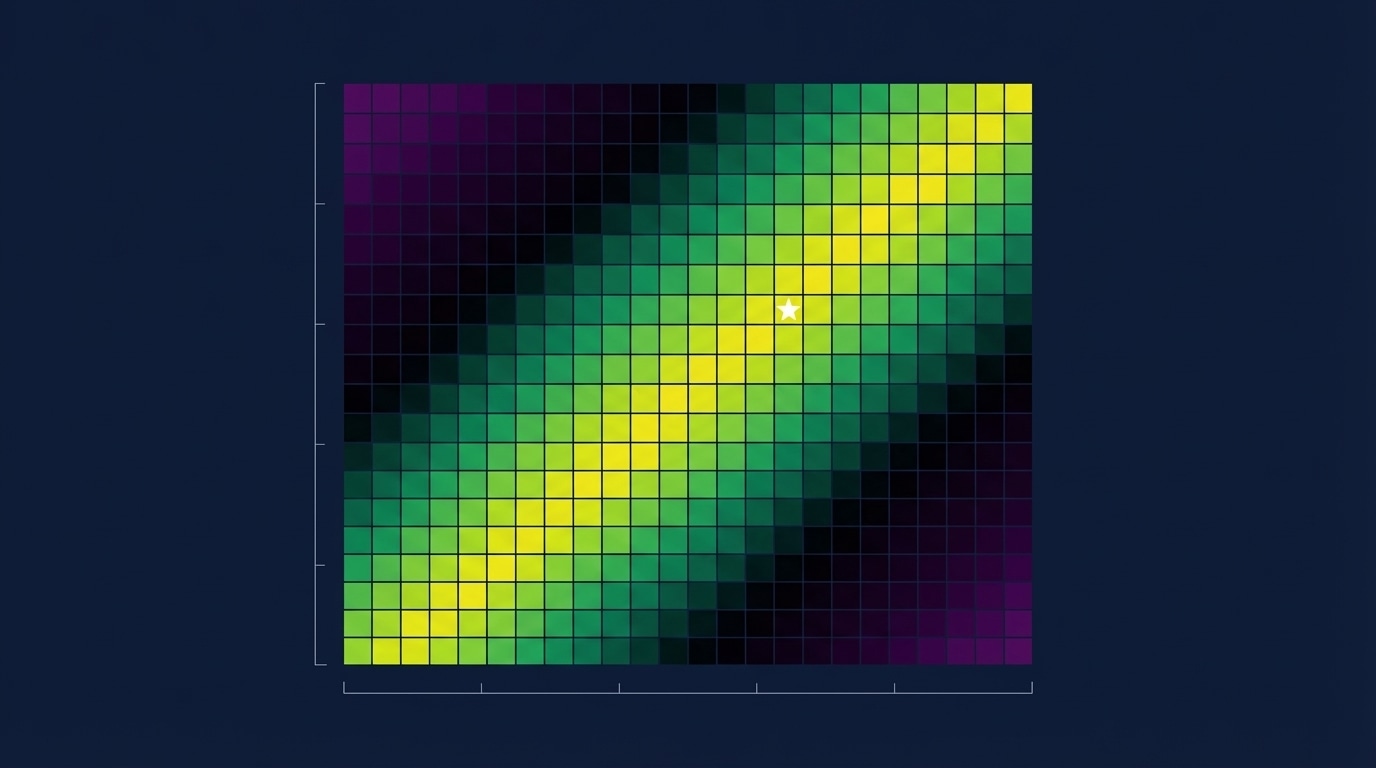

Rough rule of thumb: API product or multi-user service → vLLM or TGI. Local tinkering and demos → Ollama. Custom app, embedded, or research → llama.cpp.
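If you want that rule of thumb in a form you can drop into a planning script, here is one way to write it down. The enum and function names are invented for this sketch, and the mapping is only the heuristic above, not a benchmark result.

```python
from enum import Enum


class UseCase(Enum):
    """Coarse deployment scenarios, taken from the rule of thumb above."""
    API_PRODUCT = "API product or multi-user service"
    LOCAL_TINKERING = "local tinkering and demos"
    CUSTOM_APP = "custom app, embedded, or research"


# Heuristic only: scenario -> stacks worth evaluating first.
RULE_OF_THUMB = {
    UseCase.API_PRODUCT: ["vLLM", "TGI"],
    UseCase.LOCAL_TINKERING: ["Ollama"],
    UseCase.CUSTOM_APP: ["llama.cpp"],
}


def suggest_stack(use_case: UseCase) -> list[str]:
    """Return the stacks the rule of thumb suggests for a scenario."""
    return RULE_OF_THUMB[use_case]
```

For example, `suggest_stack(UseCase.API_PRODUCT)` gives the two server-style options, which you would then compare on your own hardware and models.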

The landscape is still moving: new entrants, mergers of ideas (e.g. speculative decoding everywhere), and more focus on latency and cost. Picking one stack now doesn’t lock you in forever, but understanding the tradeoffs helps you ship without over-engineering or under-provisioning.

nJoy 😉