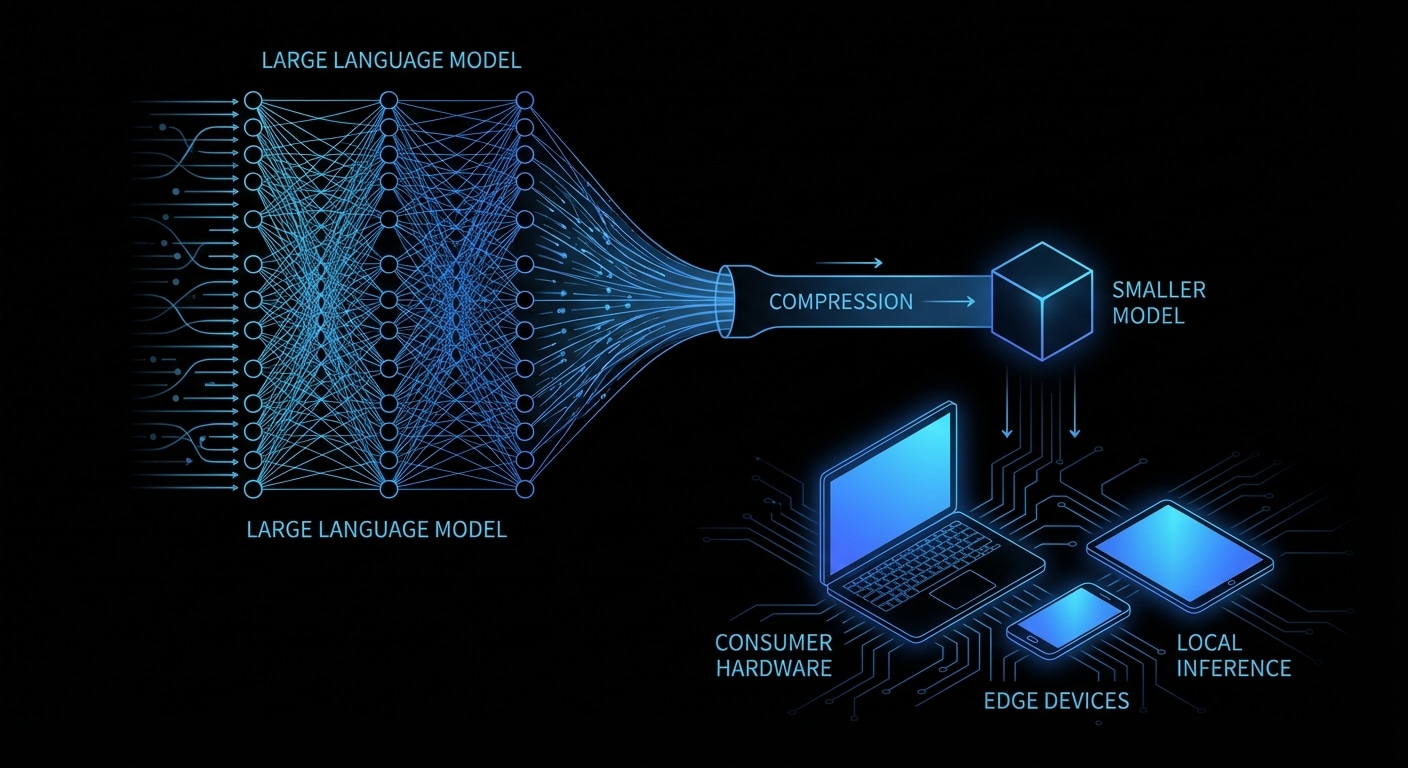

A 70B model in full 16-bit precision needs about 140 GB of VRAM for the weights alone. Almost no consumer card has that. Quantization reduces the bit width of the weights (and sometimes activations) so the same model fits in far less memory and usually runs faster, because inference is often limited by memory bandwidth. 8-bit cuts memory in half with a small quality drop; 4-bit (e.g. GPTQ, AWQ, or GGUF Q4_K_M) gets you to roughly a quarter of the size, about 35 to 40 GB for a 70B model. That fits on a pair of 24 GB GPUs or a high-end Mac with enough unified memory; on a single 24 GB GPU you have to offload some layers to the CPU or go below 4 bits. You’re trading a bit of numerical precision for accessibility.
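The sizes above are just parameter count times bits per weight. Here is a minimal sketch of that arithmetic; `weight_memory_gb` is a made-up helper name, and it counts the weights only (no KV cache, activations, or the per-group scales that real quantized files also store), so treat the results as a lower bound.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_total = n_params * bits_per_weight / 8
    return bytes_total / 1e9


# Rough weight footprint of a 70B-parameter model at common bit widths.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(70e9, bits):.0f} GB")
# 16-bit: 140 GB
# 8-bit: 70 GB
# 4-bit: 35 GB
```

Real 4-bit files come out somewhat larger than this because formats like Q4_K_M spend extra bits on scales and keep some tensors at higher precision.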

The math is simple in principle: map float16 weights to a small set of integers (e.g. 0–15 for 4-bit), store those together with the scale needed to map them back, and at runtime dequantize on the fly or use integer kernels. The art is in how you choose the mapping (per-tensor, per-group, or per-channel) and whether you calibrate on data (GPTQ, AWQ) to minimise error where it matters most. GGUF is a file format that stores quantized weights and metadata so that llama.cpp and others can load them without re-running the quantizer.
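To make the mapping concrete, here is a toy per-group 4-bit quantizer in plain Python. It is a sketch of simple round-to-nearest min/max quantization, not GPTQ, AWQ, or the actual GGUF schemes; the function names, the group size of 4, and the sample weights are all made up for illustration.

```python
def quantize_group(weights, bits=4):
    """Map floats to integer codes in [0, 2**bits - 1] using one scale and offset."""
    qmax = (1 << bits) - 1                    # 15 for 4-bit
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / qmax or 1.0           # fall back to 1.0 for a constant group
    codes = [min(qmax, max(0, round((w - lo) / scale))) for w in weights]
    return codes, scale, lo


def dequantize_group(codes, scale, lo):
    """Recover approximate floats from the integer codes."""
    return [c * scale + lo for c in codes]


def quantize(weights, group_size=4, bits=4):
    """Quantize a flat list of weights in independent groups."""
    return [
        quantize_group(weights[i:i + group_size], bits)
        for i in range(0, len(weights), group_size)
    ]


def dequantize(groups):
    """Concatenate the dequantized groups back into one flat list."""
    out = []
    for codes, scale, lo in groups:
        out.extend(dequantize_group(codes, scale, lo))
    return out


# Small weights followed by a few large ones (illustrative values only).
w = [0.02, -0.01, 0.03, 0.00, 1.50, -1.20, 0.90, -0.40]

per_group = dequantize(quantize(w, group_size=4))
per_tensor = dequantize(quantize(w, group_size=len(w)))

print("per-group max error: ", max(abs(a - b) for a, b in zip(w, per_group)))
print("per-tensor max error:", max(abs(a - b) for a, b in zip(w, per_tensor)))
```

With one scale for the whole tensor, the large weights stretch the range and the small ones lose most of their resolution. Smaller groups give each block its own range, at the cost of storing more scales and offsets.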

In practice you download a pre-quantized model (e.g. from Hugging Face), load it in vLLM, Ollama, or llama.cpp, and run. You might see a small drop in coherence or reasoning on hard tasks; for most chat and tool use it’s fine.

New formats and methods (e.g. 3-bit, mixed precision) will keep pushing the frontier. If you’re on a single machine, quantization is what makes 70B and beyond possible. If you’re in the cloud, it’s what makes those models cheap to serve.

Quantization is the key that unlocked running 70B and larger models on consumer hardware; the next step is making those quantized models even faster and more accurate.

nJoy 😉