Every few years, something happens in computing that quietly reshapes everything around it. The UNIX pipe. HTTP. REST. The transformer architecture. And now, in 2026, the Model Context Protocol. If you build software and you haven’t internalised MCP yet, this is your moment. This course will fix that – thoroughly.

What This Course Is

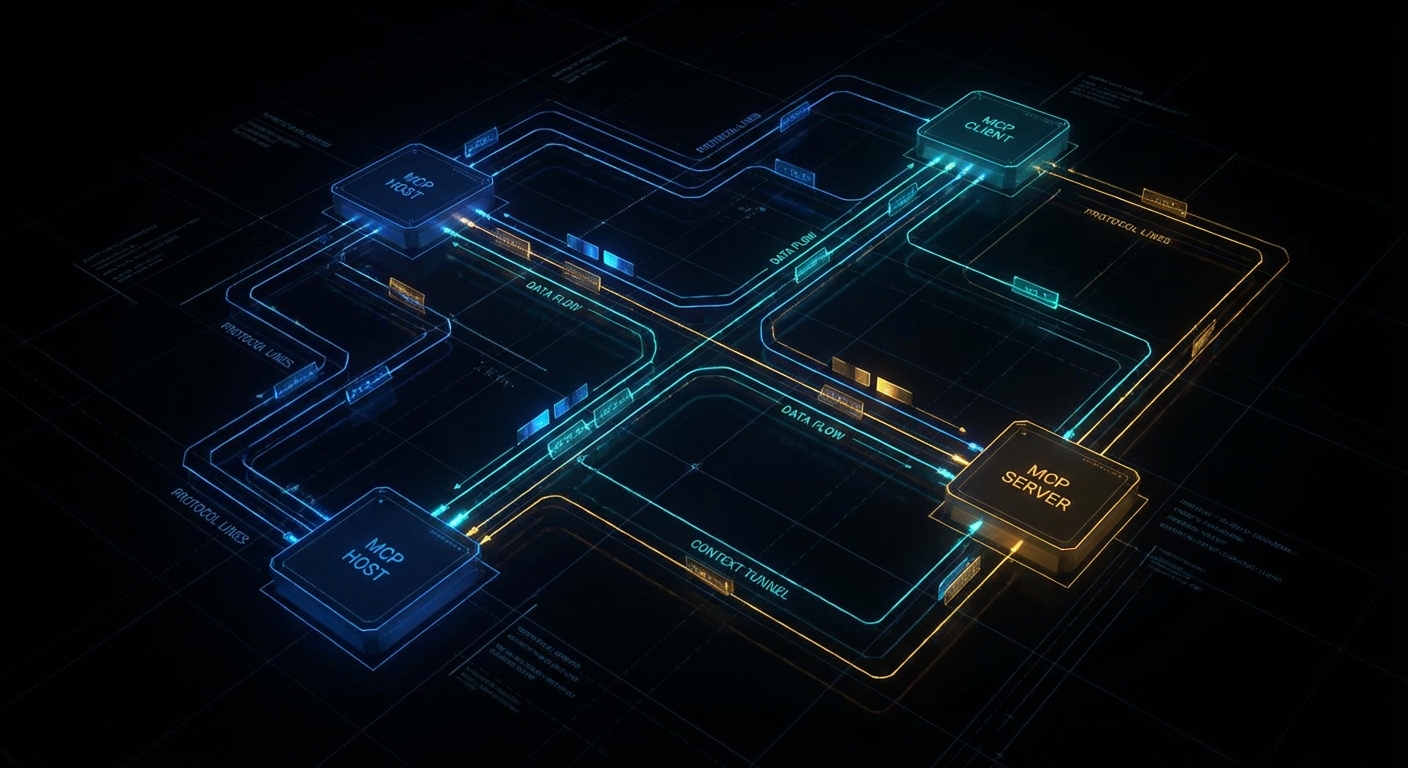

This is a full university-grade course on the Model Context Protocol – the open standard, published by Anthropic and now maintained by a broad coalition, that lets AI models talk to tools, data sources, and services in a structured, secure, and interoperable way. Think of it as HTTP for AI context: before HTTP, every web server spoke its own dialect; after HTTP, any client could talk to any server. MCP does the same thing for the agentic AI layer.

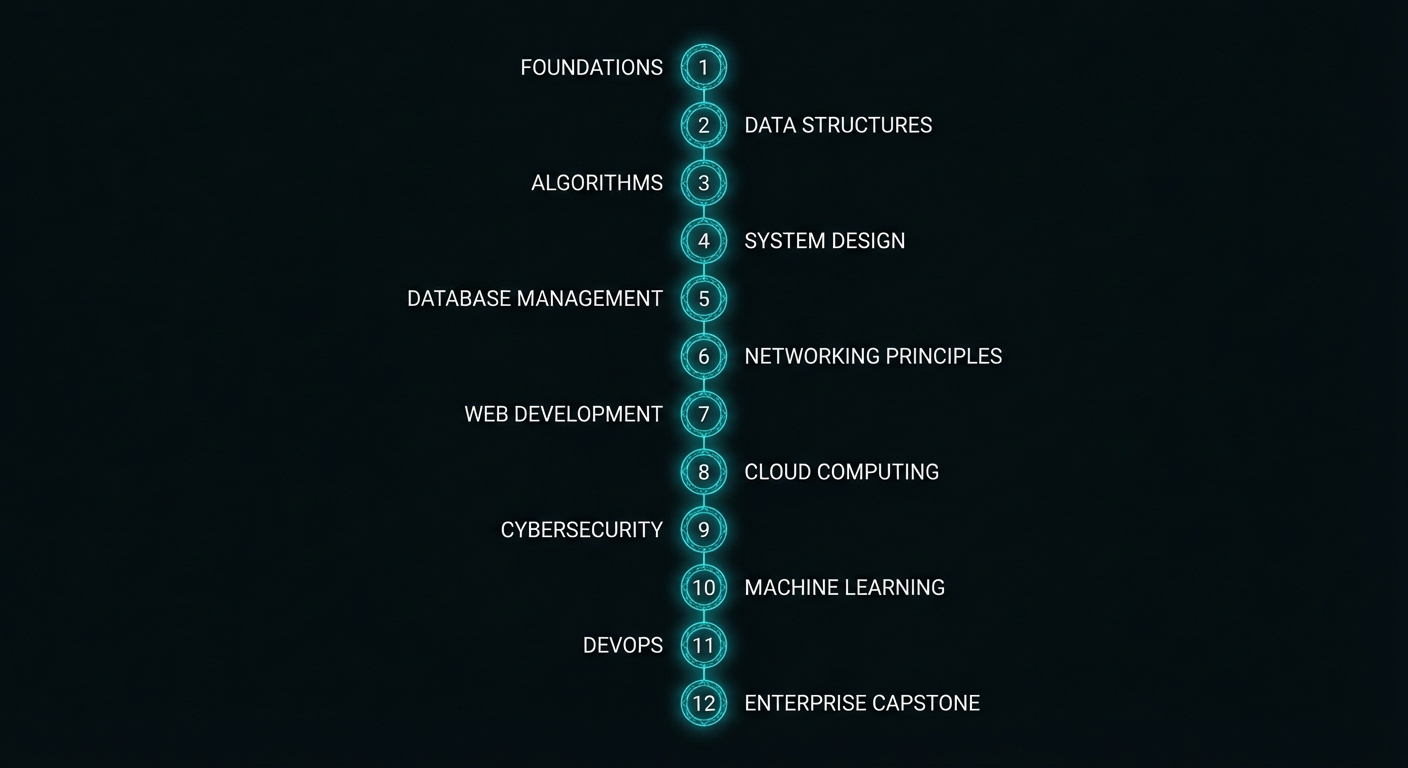

The course runs 53 lessons across 12 Parts, from zero to enterprise. Part I gives you the mental model and the first working server in under an hour. Part XII has you building a full production MCP platform with a registry, an API gateway, and multi-agent orchestration. Everything in between is ordered by dependency – no lesson assumes knowledge that hasn’t been covered yet.

“MCP provides a standardized way for applications to: build composable integrations and workflows, expose tools and capabilities to AI systems, share contextual information with language models.” – Model Context Protocol Specification, Anthropic

All code is in plain Node.js 22 ESM – no TypeScript, no compilation step, no tsconfig to wrestle with. You run node server.js and it works. The point is to teach MCP, not the type system. Where types genuinely help (complex tool schema shapes), JSDoc hints appear inline. Everywhere else, the code is clean signal.
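As a rough illustration of what an inline JSDoc hint on a tool schema shape might look like, here is a minimal sketch in plain JavaScript. The `ToolDefinition` typedef, the `defineTool` helper, and the `get_weather` tool are hypothetical names invented for this example and are not part of the MCP SDK; only the `name`, `description`, and `inputSchema` fields follow the tool shape described in the MCP specification.

```javascript
/**
 * Hypothetical shape for a tool definition, documented with JSDoc only.
 * @typedef {object} ToolDefinition
 * @property {string} name
 * @property {string} description
 * @property {{ type: 'object', properties: Record<string, object>, required?: string[] }} inputSchema
 */

/**
 * Checks the basic shape at runtime and returns the definition unchanged.
 * Illustrative helper, not an SDK function.
 * @param {ToolDefinition} tool
 * @returns {ToolDefinition}
 */
function defineTool(tool) {
  if (typeof tool.name !== 'string' || tool.name.length === 0) {
    throw new TypeError('tool.name must be a non-empty string');
  }
  if (tool.inputSchema?.type !== 'object') {
    throw new TypeError('tool.inputSchema.type must be "object"');
  }
  return tool;
}

// Example tool: the name and schema are made up for this sketch.
const weatherTool = defineTool({
  name: 'get_weather',
  description: 'Look up the current weather for a city',
  inputSchema: {
    type: 'object',
    properties: { city: { type: 'string' } },
    required: ['city'],
  },
});

console.log(weatherTool.name);
```

An editor with JavaScript type checking enabled can use the typedef for completion and warnings, while the file still runs directly with node and no build step.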

Who This Is For

The course was designed for two audiences who need the same rigour but come at it differently:

- University students – third or fourth year CS, AI, or software engineering. You know how to write async JavaScript. You’ve used an LLM API. You want to understand the architecture that makes production agentic systems work, not just the vibes.

- Professional engineers and architects – you’re building AI-powered products or evaluating MCP for your organisation. You need the protocol internals, the security model, the enterprise deployment patterns, and a clear comparison of how OpenAI, Anthropic Claude, and Google Gemini each implement the standard differently.

If you’re new to programming, learn the Node.js fundamentals first. If you’re already shipping LLM features to production, you can start from Part IV (provider integrations) and backfill the protocol theory as needed.

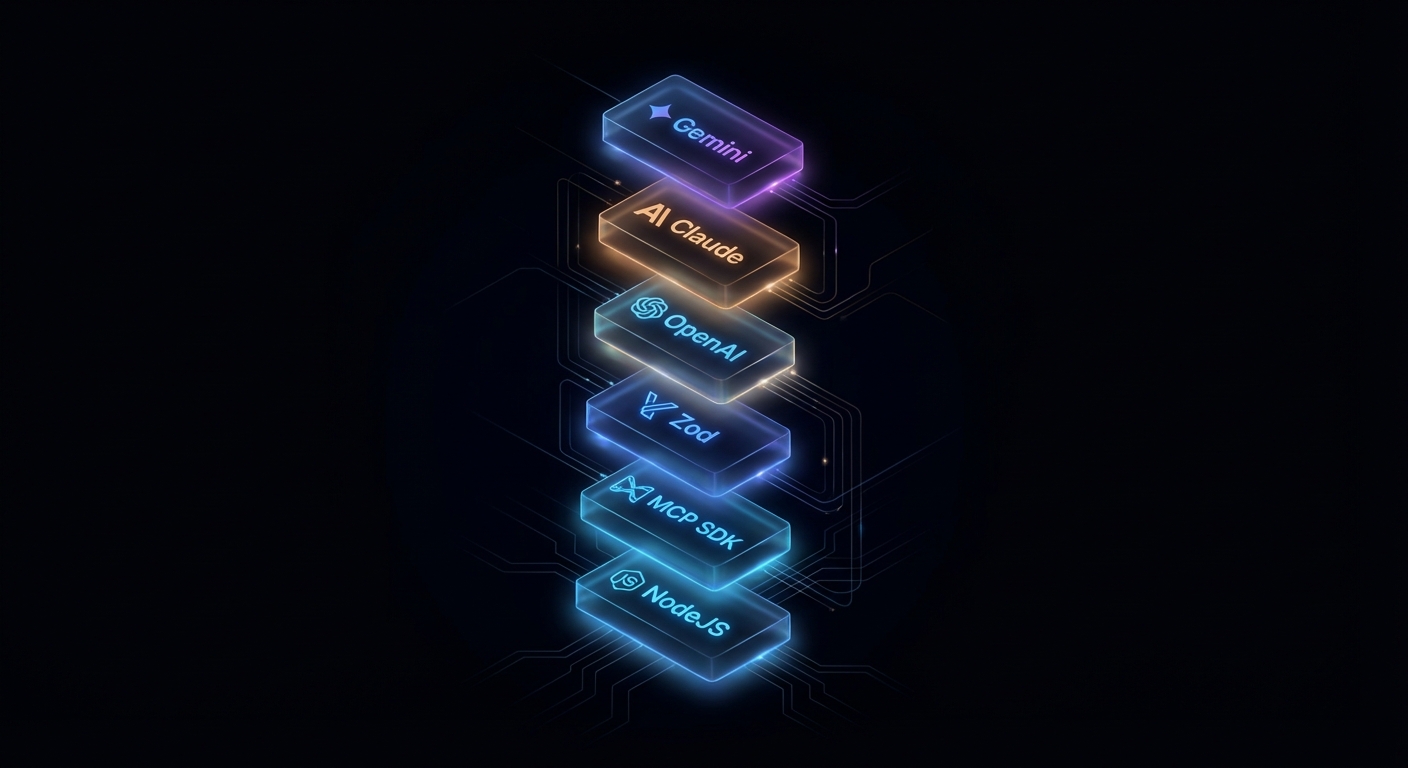

The Technology Stack

Every lesson uses the same stack throughout, so you never lose time context-switching:

- Runtime: Node.js 22+ with native ESM (`"type": "module"`)

- MCP SDK: `@modelcontextprotocol/sdk` v1 stable (v2 features noted as they ship)

- Schema validation: `zod` v4 for tool input schemas

- HTTP transport: `@modelcontextprotocol/express` or Hono adapter

- OpenAI: `openai` latest – tool calling with GPT-4o and o3

- Anthropic: `@anthropic-ai/sdk` latest – Claude 3.5/3.7 Sonnet

- Gemini: `@google/generative-ai` latest – Gemini 2.0 Flash and 2.5 Pro

- Native Node.js extras: `--env-file` for secrets, `node:test` for tests
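To make the `--env-file` item concrete, here is a small sketch of how a script might read a secret that Node.js has loaded from a `.env` file. The `requireEnv` helper is a hypothetical name made up for this example, and `OPENAI_API_KEY` with the value `sk-example` is placeholder data, not a real key.

```javascript
/**
 * Reads a required environment variable and fails fast if it is missing.
 * Illustrative helper, not part of Node.js or any SDK.
 * @param {string} name
 * @param {Record<string, string | undefined>} [env] defaults to process.env
 * @returns {string}
 */
function requireEnv(name, env = process.env) {
  const value = env[name];
  if (value === undefined || value.trim() === '') {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// Demo with an in-memory object so the sketch runs without a .env file.
const demoEnv = { OPENAI_API_KEY: 'sk-example' };
const apiKey = requireEnv('OPENAI_API_KEY', demoEnv);
console.log(`Loaded a key of length ${apiKey.length}`);
```

In a real script you would call `requireEnv('OPENAI_API_KEY')` with no second argument and start the process with `node --env-file=.env server.js`, so the key never appears in source code.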

No framework lock-in beyond the MCP SDK itself. All HTTP adapter code works with plain Node.js http if you prefer – the adapter packages are convenience wrappers, not requirements.
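One way to see why an adapter can be a thin wrapper: the core of a request handler is a function from a parsed JSON-RPC 2.0 message to a response object, and an HTTP layer only has to parse the body and serialise the result. The sketch below is a deliberately simplified illustration, not the SDK's implementation. The `handleMessage` function and the `echo` tool are hypothetical, and the initialisation handshake, notifications, sessions, and streaming are all left out.

```javascript
// Hypothetical in-memory tool table, for illustration only.
const tools = {
  echo: {
    description: 'Return the text that was sent',
    inputSchema: {
      type: 'object',
      properties: { text: { type: 'string' } },
      required: ['text'],
    },
    run: (args) => String(args.text),
  },
};

/**
 * Maps one parsed JSON-RPC 2.0 request to a response object.
 * Simplified: no initialize handshake, no notifications, no batching.
 */
function handleMessage(message) {
  const { id, method, params } = message;
  if (message.jsonrpc !== '2.0' || typeof method !== 'string') {
    return { jsonrpc: '2.0', id: id ?? null, error: { code: -32600, message: 'Invalid Request' } };
  }
  if (method === 'tools/list') {
    const list = Object.entries(tools).map(([name, tool]) => ({
      name,
      description: tool.description,
      inputSchema: tool.inputSchema,
    }));
    return { jsonrpc: '2.0', id, result: { tools: list } };
  }
  if (method === 'tools/call') {
    const name = params?.name;
    // Object.hasOwn avoids matching inherited keys such as "toString".
    if (typeof name !== 'string' || !Object.hasOwn(tools, name)) {
      return { jsonrpc: '2.0', id, error: { code: -32602, message: `Unknown tool: ${name}` } };
    }
    const text = tools[name].run(params.arguments ?? {});
    return { jsonrpc: '2.0', id, result: { content: [{ type: 'text', text }] } };
  }
  return { jsonrpc: '2.0', id, error: { code: -32601, message: 'Method not found' } };
}

console.log(JSON.stringify(handleMessage({ jsonrpc: '2.0', id: 1, method: 'tools/list' })));
```

Wiring this to plain `node:http` would amount to collecting the POST body, calling `JSON.parse`, passing the result to `handleMessage`, and writing back `JSON.stringify` of the response. A production remote server has more to handle than this sketch covers.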

Course Curriculum

Fifty-three lessons across twelve parts. Links will go live as each lesson publishes.

Part I: Foundations

- What is MCP? The Protocol that Unified AI Tool Integration

- Hosts, Clients, and Servers: The Three-Role Model

- Under the Hood: JSON-RPC 2.0, Lifecycle, and Capability Negotiation

- Node.js Dev Environment: SDK, Zod, ESM, and Tooling

- Your First MCP Server and Client in Node.js

Part II: Core Server Primitives

- Tools: Defining, Validating, and Executing LLM-Callable Functions

- Resources: Exposing Static and Dynamic Data to AI Models

- Prompts: Reusable Templates and Workflow Fragments

- Sampling: Server-Initiated LLM Calls and Recursive AI

- Elicitation: Asking the User for Input from Inside a Server

- Roots: Filesystem and URI Boundaries

Part III: Transports

- stdio Transport: The Local Standard and When to Use It

- Streamable HTTP and SSE: Building Remote MCP Servers

- HTTP Adapters: Express, Hono, and the Node Middleware

- Transport Security: TLS, CORS, and Host Header Validation

Part IV: OpenAI Integration

- OpenAI + MCP: Tool Calling with GPT-4o and o3

- Streaming Completions and Structured Outputs with MCP Tools

- Responses API and the Agents SDK + MCP

- Building a Production OpenAI-Powered MCP Client

Part V: Anthropic Claude Integration

- Claude 3.5/3.7 + MCP: Native Tool Calling

- Extended Thinking Mode with MCP Tools

- Claude Code and Agent Skills: MCP in the Era of Autonomous Coding

- Patterns and Anti-Patterns: Claude + MCP in Production

Part VI: Google Gemini Integration

- Gemini 2.0/2.5 Pro + MCP: Function Calling at Scale

- Multi-modal MCP: Images, PDFs, and Audio with Gemini

- Google AI Studio, Vertex AI, and MCP Servers

- Building a Production Gemini-Powered MCP Client

Part VII: Cross-Provider Patterns

- OpenAI vs Claude vs Gemini: The Definitive MCP Tool Calling Comparison

- Provider Abstraction: A Node.js Library for Multi-Provider MCP Clients

- Choosing the Right Model: A Decision Framework for MCP Applications

Part VIII: Security and Trust

- OAuth 2.0 with MCP: Authentication for Remote Servers

- Authorization, Scope Consent, and Incremental Permissions

- Tool Safety: Input Validation, Sandboxing, and Execution Limits

- Secrets Management: Vault, Environment Variables, and Rotation

- Audit Logging, Compliance, and Data Privacy

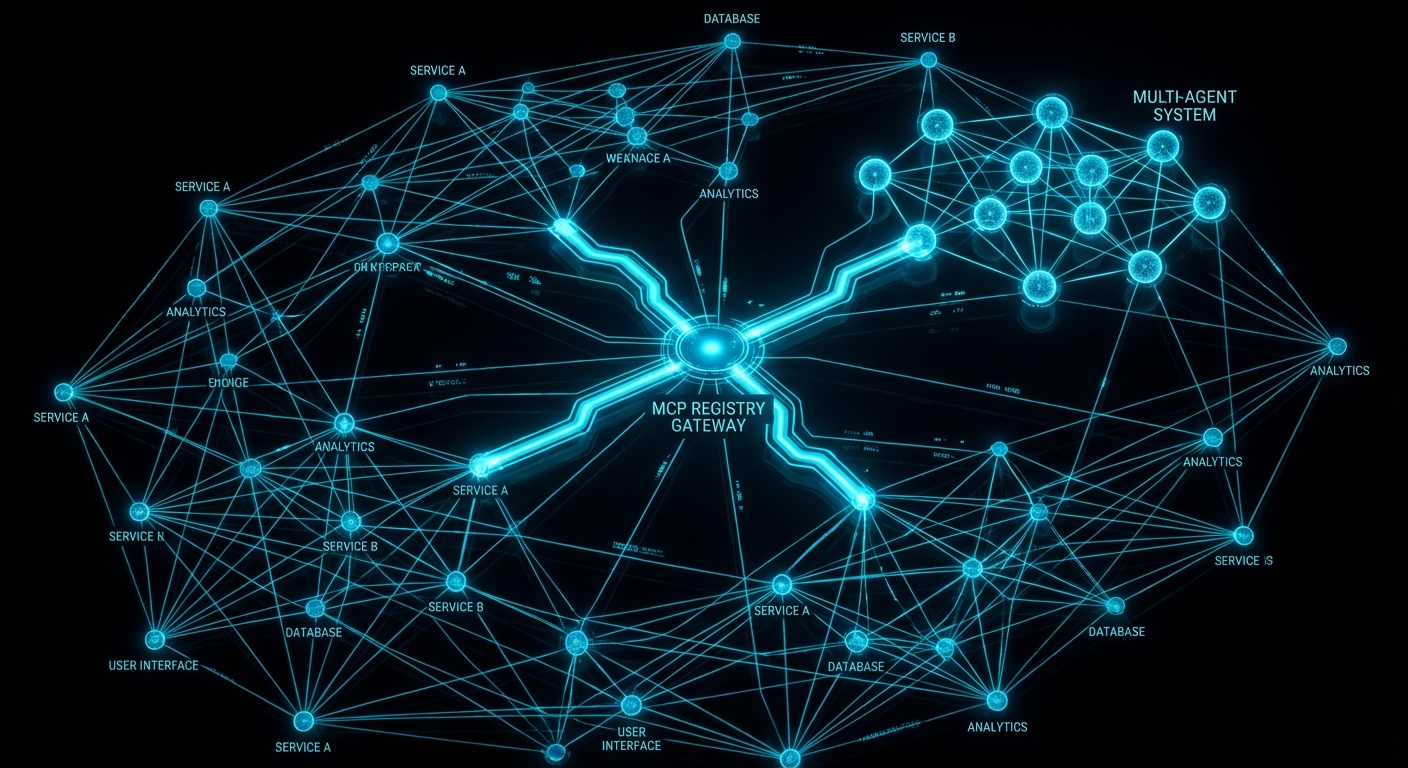

Part IX: Multi-Agent Systems

- Agent-to-Agent (A2A) Protocol: MCP in Multi-Agent Architectures

- MCP + LangChain and LangGraph: Orchestration Patterns in Node.js

- Reliable Agent Pipelines: State, Memory, and Checkpoints

- Failure Modes: Loops, Hallucinations, and Cascades in MCP Agents

Part X: Enterprise Patterns

- Production Deployment: Docker, Health Checks, and Graceful Shutdown

- Scaling MCP: Load Balancing, Rate Limiting, and Caching

- Observability: Logging, Metrics, and Distributed Tracing

- CI/CD for MCP Servers: Testing, Versioning, and Zero-Downtime Deploy

- MCP Registry, Discovery, and Service Mesh Patterns

Part XI: Advanced Protocol Features

- Tasks API: Long-Running Async Operations

- Cancellation, Progress, and Backpressure in MCP Streams

- Protocol Versioning, Backwards Compatibility, and Migration

- Writing Custom Transports and Protocol Extensions

Part XII: Capstone Projects

- Project 1: PostgreSQL Query Agent with OpenAI + MCP

- Project 2: File System Agent with Claude + MCP

- Project 3: A Multi-Provider API Integration Hub

- Project 4: Enterprise AI Assistant with Auth, RBAC, and Audit Logging

- Capstone: Building a Full MCP Platform – Registry, Gateway, and Agents

How the Lessons Are Written

Each lesson is designed to be self-contained and longer than comfortable. The goal is that a reader who sits down with the article and a terminal open will finish knowing how to do the thing, not just knowing that the thing exists. That means:

- Named failure cases – every lesson covers what goes wrong, specifically, with the exact code that triggers it and the exact fix. Learning from bad examples sticks better than learning from good ones.

- Official source quotes – every lesson cites the MCP specification, SDK documentation, or relevant RFC directly. The wording is exact, not paraphrased. The link goes to the actual source document.

- Working code – every code block runs. It is tested against the actual SDK version noted at the top of the lesson. Nothing is pseudo-code unless explicitly labelled.

- Balance – where a technique has valid alternatives, the lesson says so. A reader should leave knowing when to use the thing taught, and when not to.

“The key words MUST, MUST NOT, REQUIRED, SHALL, SHALL NOT, SHOULD, SHOULD NOT, RECOMMENDED, NOT RECOMMENDED, MAY, and OPTIONAL in this document are to be interpreted as described in BCP 14 when, and only when, they appear in all capitals.” – MCP Specification, Protocol Conventions

The course is sourced from over 77 videos across six major MCP playlists from channels including theailanguage, Microsoft Developer, and CampusX – then substantially expanded with code, official spec references, and architectural analysis that the videos don’t cover. The videos are the floor, not the ceiling.

What to Check Right Now

- Verify Node.js 22+ – run `node --version`. If you’re below 22, install via nodejs.org or `nvm install 22`.

- Install yt-dlp (optional, for running the research tooling) – `brew install yt-dlp` or `pip install yt-dlp`.

- Get API keys before Part IV – OpenAI, Anthropic, and Google AI Studio keys. Store them in `.env` files, never in code.

- Bookmark the MCP spec – modelcontextprotocol.io/specification. You’ll refer to it constantly.

- Start with Lesson 1 – even if you’ve used LLM tool calling before, the framing in the first three lessons will change how you think about it.
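If you would rather script the Node.js version check from the list above than eyeball it, a minimal sketch could look like this. The `meetsMinimumNode` function is a hypothetical helper written for this example; `process.versions.node` is the standard way to read the running version.

```javascript
/**
 * Returns true when a Node.js version string such as "22.3.0" or "v22.3.0"
 * has a major version of at least `minMajor`.
 * Illustrative helper, not part of Node.js.
 */
function meetsMinimumNode(version, minMajor = 22) {
  const major = Number.parseInt(version.replace(/^v/, '').split('.')[0], 10);
  return Number.isInteger(major) && major >= minMajor;
}

// Report on the Node.js version that is running this script.
const current = process.versions.node;
console.log(
  meetsMinimumNode(current)
    ? `Node.js ${current} meets the 22+ requirement`
    : `Node.js ${current} is too old for this course, upgrade to 22+`
);
```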

nJoy 😉