LLMs are great at adding: new features, new branches, new error messages. They’re bad at removing or simplifying. When you ask for a change, they tend to append code or add another condition rather than delete dead paths or consolidate duplicates. That’s plausibly part training (most edits in the wild are additive) and part the nature of autoregressive generation: the model is always “continuing” the text, not rewriting it. So the codebase drifts: more branches, more flags, more special cases, and the implicit state machine (which states exist, which transitions are valid) slowly diverges from the one you thought you had.

The additive trap shows up in control flow: you add a new state or transition and forget to add the corresponding cleanup, timeout, or error path. Or you add a new “success” path but the old “failure” path now leads nowhere. The model doesn’t reason over the full graph; it fills in the local request. So you get stuck states, unreachable code, or two flags that can both be true when they shouldn’t be. Tests that only cover the happy path won’t catch these, because every individual branch still runs fine in isolation. You need a view of the structure.
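To make "stuck" and "unreachable" concrete, here is a minimal sketch in Python. It assumes the state machine can be written down as a plain transition table; the upload-job states and event names are invented for illustration and don't come from any real codebase. The `verifying` state plays the part of the additive edit: it was bolted on without any outgoing path.

```python
from collections import deque

# Hypothetical transition table: state -> {event: next_state}.
TRANSITIONS = {
    "idle": {"start": "uploading"},
    "uploading": {"ok": "verifying", "error": "failed"},
    "verifying": {},   # added later, with no success, failure, or timeout path
    "failed": {"retry": "uploading"},
    "done": {},
    "cancelled": {},   # declared, but nothing ever transitions here
}
INITIAL = "idle"
TERMINAL = {"done", "cancelled"}  # states that are allowed to have no exits


def reachable_states(transitions, initial):
    """Breadth-first walk over the graph, starting from the initial state."""
    seen = {initial}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        for target in transitions.get(state, {}).values():
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


def structural_problems(transitions, initial, terminal):
    """Report declared states that can't be reached, and reachable dead ends."""
    reachable = reachable_states(transitions, initial)
    unreachable = sorted(set(transitions) - reachable)
    stuck = sorted(
        s for s in reachable
        if s not in terminal and not transitions.get(s)
    )
    return {"unreachable": unreachable, "stuck": stuck}


print(structural_problems(TRANSITIONS, INITIAL, TERMINAL))
# {'unreachable': ['cancelled', 'done'], 'stuck': ['verifying']}
```

A happy-path test that stops at "upload accepted" would pass here. Only the graph view shows that `done` can no longer be reached at all.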

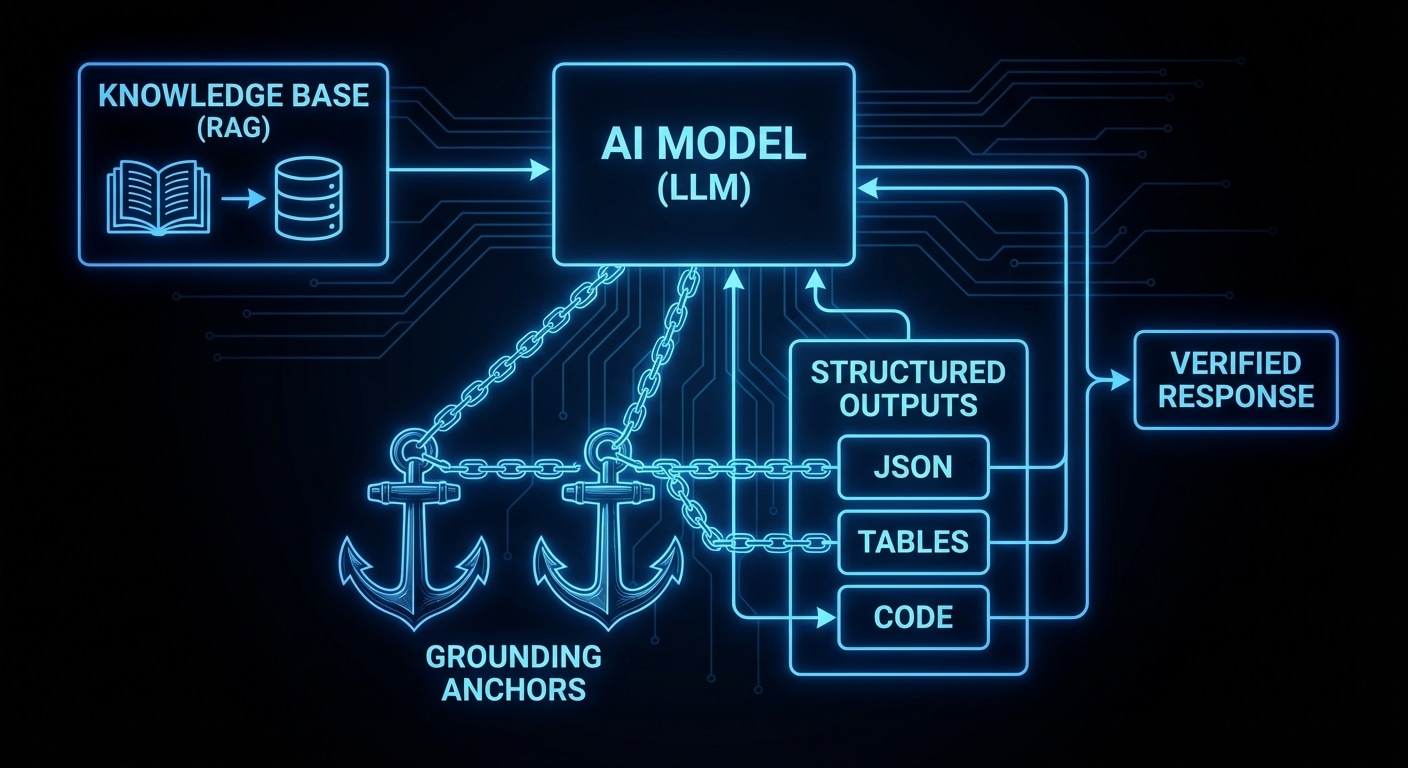

What would help: tools or disciplines that force a “structure pass.” After the model suggests a change, something checks: are all states covered? Are there new branches with no error handling? Are there conflicting flags? That could be a linter, a custom checker, or a formal spec that you diff against. The key is to treat “shape” as a first-class concern, not just “does it run in one scenario.”
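One rough way such a structure pass could look, sketched in Python: keep a small spec of the intended transitions, extract the same table from the code (by hand or by tooling, which is the hard part and is assumed away here), and diff the two. The tables, the `BUSY_STATES` set, and the "every busy state must handle `error` and `timeout`" policy are all assumptions made up for this example.

```python
# Hypothetical tables: state -> {event: next_state}.
SPEC = {
    "idle": {"start": "uploading"},
    "uploading": {"ok": "done", "error": "failed", "timeout": "failed"},
    "failed": {"retry": "uploading"},
    "done": {},
}
ACTUAL = {  # what the code does after a couple of additive edits
    "idle": {"start": "uploading"},
    "uploading": {"ok": "verifying", "error": "failed"},
    "verifying": {"ok": "done"},
    "failed": {"retry": "uploading"},
    "done": {},
}
BUSY_STATES = {"uploading", "verifying"}  # assumed: states doing real work
REQUIRED_EVENTS = {"error", "timeout"}    # assumed policy for busy states


def edges(table):
    """Flatten a transition table into a set of (state, event, target) triples."""
    return {(s, e, t) for s, handlers in table.items() for e, t in handlers.items()}


def structure_pass(spec, actual, busy_states, required_events):
    """Diff the implemented graph against the spec and flag missing error paths."""
    spec_edges, actual_edges = edges(spec), edges(actual)
    unhandled = {}
    for state in sorted(busy_states):
        missing = required_events - set(actual.get(state, {}))
        if missing:
            unhandled[state] = sorted(missing)
    return {
        "dropped": sorted(spec_edges - actual_edges),  # in the spec, gone from code
        "added": sorted(actual_edges - spec_edges),    # in the code, not in the spec
        "unhandled": unhandled,                        # busy states lacking error paths
    }


report = structure_pass(SPEC, ACTUAL, BUSY_STATES, REQUIRED_EVENTS)
for key, value in report.items():
    print(key, value)
```

In this sketch the report lists the dropped `timeout` transition out of `uploading`, the new `verifying` edges that the spec never mentions, and the missing `error` and `timeout` handlers on `verifying`. Conflicting flags would need a separate invariant check; the simpler fix is usually to replace the flags with a single state value so the bad combinations can't be represented.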

Until we have that, the best mitigation is to use the model for small, localized edits and to do structural review yourself. When you add a state or a branch, explicitly ask: what’s the reverse path? What cleans up? What happens on failure? The model won’t ask for you.

Expect more research and tooling on “structural correctness” of generated code and on ways to make the additive trap visible and fixable.

nJoy 😉