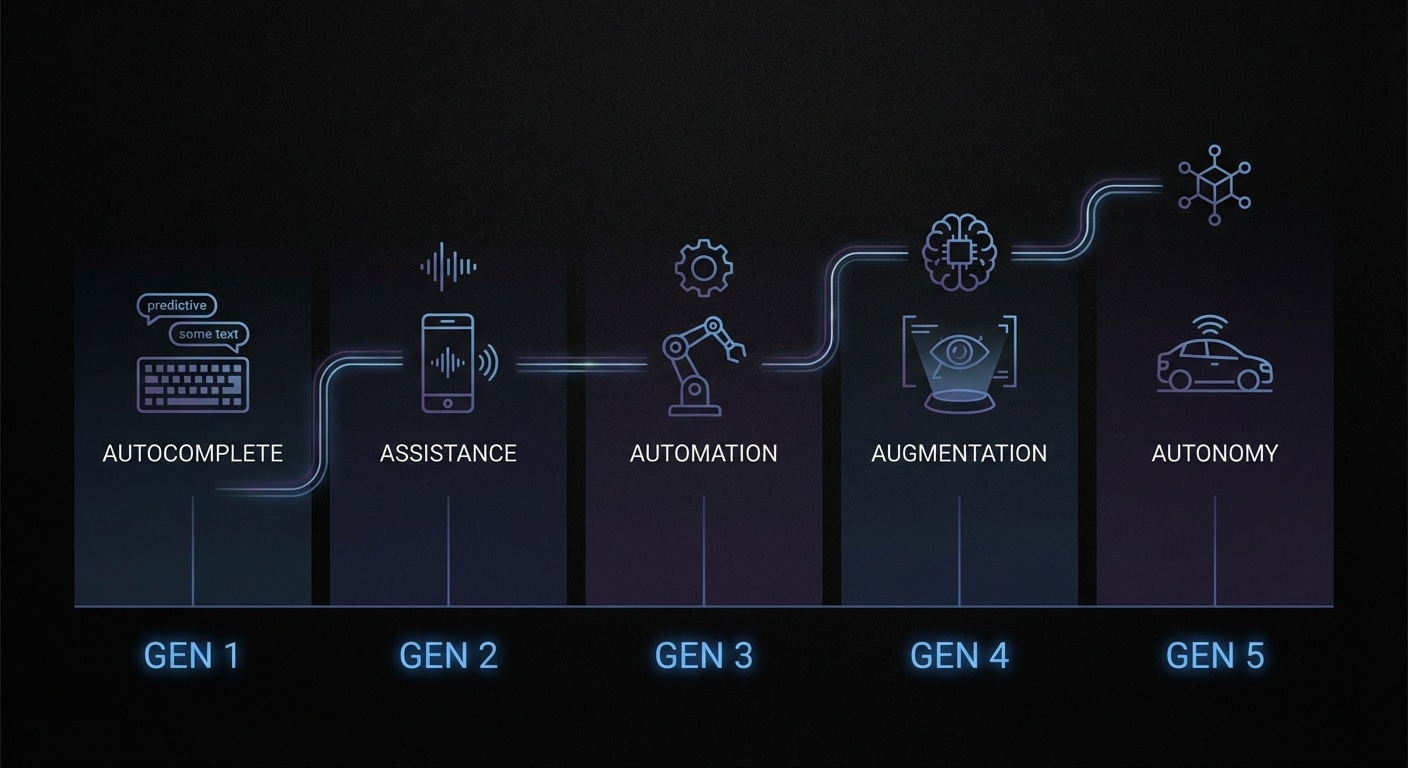

AI coding tools have evolved in waves. First was autocomplete: suggest the next token or line from context. Then came inline suggestions (Copilot-style): whole lines or blocks. Then chat-in-editor: ask a question and get a snippet. Then agents: the model can run tools, read files, and make multiple edits to reach a goal. Each wave added autonomy and scope; each wave also added the risk of wrong or brittle code. So we’ve gone from “finish my line” to “implement this feature” in a few years.

Split more finely (you can draw the lines slightly differently), that gives roughly five generations: (1) autocomplete, (2) snippet suggestion, (3) chat with single-shot generation, (4) multi-turn chat with context, (5) agents with tools and persistence. We’re in the fifth now. The next might be agents that plan across sessions, that are grounded in formal specs, or that collaborate with structural checkers. The direction is always “more autonomous, more context-aware”; the challenge is always “more correct, not just more code.”

From autocomplete to autonomy, the user’s job has shifted from writing every character to guiding and verifying. That’s a win for speed and a risk for quality. The teams that get the most out of AI coding are the ones that keep a clear bar for “done” (tests, review, structure) and use the model as a draft engine, not a replacement for design and verification.

The progress is real: we can now say “add a retry with backoff” and get a plausible implementation in seconds. The unfinished work is making that implementation structurally sound and maintainable. That’s where the next generation of tools will focus.
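To make the “retry with backoff” example concrete, here is a minimal Python sketch of what a reviewable version might look like. It is an illustration under assumptions, not the output of any particular tool: the name `retry_with_backoff` and every parameter (`max_attempts`, `base_delay`, `max_delay`, `retry_on`, `sleep`, `jitter`) are hypothetical. The gap between “plausible” and “structurally sound” tends to sit in the details: a bounded number of attempts, a capped delay, an explicit list of retryable errors, and a sleep function you can swap out in tests.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    fn: Callable[[], T],
    *,
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    sleep: Callable[[float], None] = time.sleep,
    jitter: Callable[[float], float] = lambda d: random.uniform(0, d),
) -> T:
    """Call fn(), retrying on the listed exceptions with exponential backoff.

    All names here are illustrative assumptions, not a standard API.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")

    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            # Out of attempts: let the caller see the original error.
            if attempt == max_attempts:
                raise
            # Exponential growth (base, 2*base, 4*base, ...), capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Jitter spreads out retries from many clients; injectable for tests.
            sleep(jitter(delay))

    # Every loop iteration either returns or raises, so this is never reached.
    raise AssertionError("unreachable")
```

Because `sleep` and `jitter` are parameters, a test can pass a recording function and an identity jitter, then assert on the exact delays without waiting. That is the kind of “clear bar for done” a reviewer can check.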

Expect more agentic and multi-step tools, and in parallel more verification and structural tooling to keep the output trustworthy.

nJoy 😉