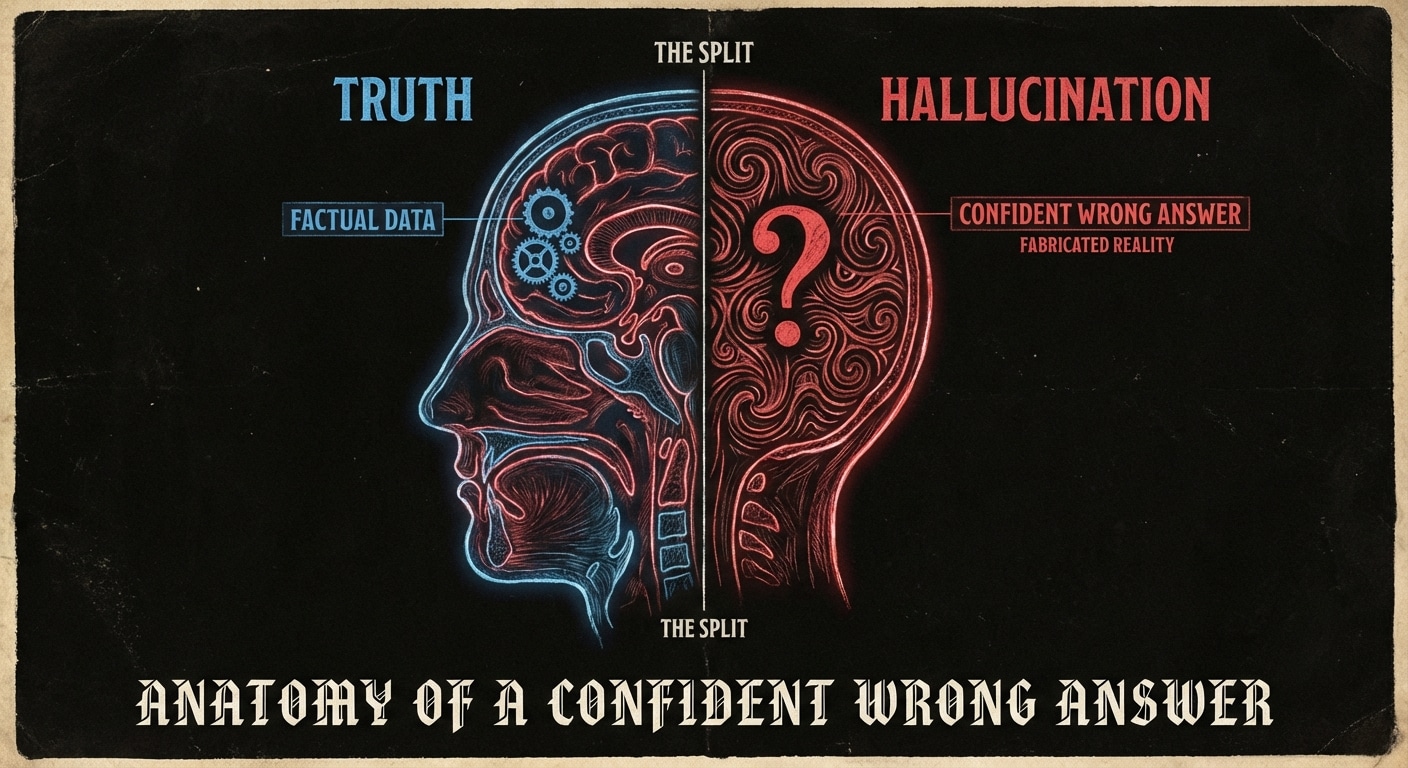

A hallucination is often confident: the model states something wrong with no hedging, in the same tone it uses for correct answers. That’s because the model is optimized for surface form (grammar, style, “authoritative” phrasing), and it has no separate channel for “I’m unsure.” So you get “The capital of Mars is Olympus City” or a fake study citation that looks real. The anatomy of such an error is simple: the model chose a high-probability continuation that fits the prompt and prior tokens, and that continuation happened to be false.
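To make that anatomy concrete, here is a toy sketch of greedy next-token selection. The candidate continuations and their scores in `toy_logits` are invented for illustration and do not come from any real model; the point is only that the selection step takes scores as input and has no argument for truth.

```python
import math


def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy_pick(logits: dict[str, float]) -> tuple[str, float]:
    """Return the most probable continuation and its probability.

    Nothing in here checks whether the continuation is true.
    """
    probs = softmax(logits)
    best = max(probs, key=probs.get)
    return best, probs[best]


# Hypothetical scores for the prompt "The capital of Mars is" (made up).
toy_logits = {"Olympus City": 3.1, "unknown": 0.4, "not a real place": 0.2}

if __name__ == "__main__":
    token, prob = greedy_pick(toy_logits)
    print(f"picked {token!r} with probability {prob:.2f}")
```

The fluent, specific answer wins because it scores highest, and the probability attached to it measures how well it fits the text, not whether it is correct.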

Confidence and wrongness can combine in dangerous ways. In code, the model might invent an API that doesn’t exist or a parameter that sounds right but isn’t. In medicine or law, a confident wrong answer can be worse than “I don’t know.” The user often can’t tell the difference until they verify, and many users don’t verify. So the harm is in the pairing: wrong + confident.
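For the invented-API case, one cheap check is to ask the installed library itself before trusting the suggestion. The sketch below uses only the standard library (`importlib`, `inspect`); the helper names `api_exists` and `accepts_parameter` are my own, and the check only covers what is importable in your environment, so treat it as a first filter rather than proof of correctness.

```python
import importlib
import inspect


def api_exists(module_name: str, attr_path: str) -> bool:
    """Return True if module_name.attr_path resolves to a real object."""
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        return False
    for part in attr_path.split("."):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True


def accepts_parameter(func, param_name: str) -> bool:
    """Return True if func names param_name or takes **kwargs.

    A **kwargs function cannot be ruled out by its signature alone,
    so it is reported as "might accept it".
    """
    try:
        params = inspect.signature(func).parameters
    except (TypeError, ValueError):
        # Some builtins expose no introspectable signature.
        return False
    if param_name in params:
        return True
    return any(p.kind is inspect.Parameter.VAR_KEYWORD for p in params.values())


if __name__ == "__main__":
    import shutil

    print(api_exists("json", "dumps"))         # a real function
    print(api_exists("json", "dumps_pretty"))  # a plausible-sounding invention
    print(accepts_parameter(shutil.copyfile, "follow_symlinks"))
    print(accepts_parameter(shutil.copyfile, "overwrite"))  # sounds right, isn't
```

A check like this catches names and parameters that do not exist; it says nothing about whether a real function does what the model claims it does.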

Some models are being tuned to hedge or say “I’m not sure” when they’re uncertain, but that’s a band-aid: the model still doesn’t have access to ground truth. The better approach is not to rely on the model’s self-assessment at all. Use retrieval, tools, and human checks for anything that must be correct. Treat confident-sounding output as a “draft” until verified.

In UX you can nudge users: “Always verify facts and code.” In system design you can add guardrails: require citations, or run generated code in a sandbox and check the result. The goal is to make the cost of trusting a hallucination visible and to make verification easy.
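The “run it and check the result” guardrail can be sketched in a few lines. This is a minimal version, assuming the generated code is Python and that you know what output to expect; `run_and_check` is a hypothetical helper name. Note that a child process with a timeout is not real isolation: for untrusted code you would still want a container or VM around it.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_and_check(code: str, expected_stdout: str, timeout_s: float = 5.0) -> bool:
    """Run generated code in a child interpreter and compare its stdout.

    Returns False on a non-zero exit, a timeout, or mismatched output.
    """
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "generated.py"
        script.write_text(code, encoding="utf-8")
        try:
            result = subprocess.run(
                # -I runs Python in isolated mode (ignores env vars and user site-packages).
                [sys.executable, "-I", str(script)],
                capture_output=True,
                text=True,
                timeout=timeout_s,
                cwd=tmp,
            )
        except subprocess.TimeoutExpired:
            return False
    return result.returncode == 0 and result.stdout.strip() == expected_stdout.strip()


if __name__ == "__main__":
    print(run_and_check("print(2 + 2)", "4"))
    print(run_and_check("import made_up_library_xyz", ""))
```

The design choice here is that the verdict comes from execution, not from how sure the model sounded, which is exactly the separation of confidence from correctness that the guardrail is meant to enforce.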

Expect more work on uncertainty signaling and citation, but the core lesson remains: confidence and correctness are not the same. Design for that.

nJoy 😉