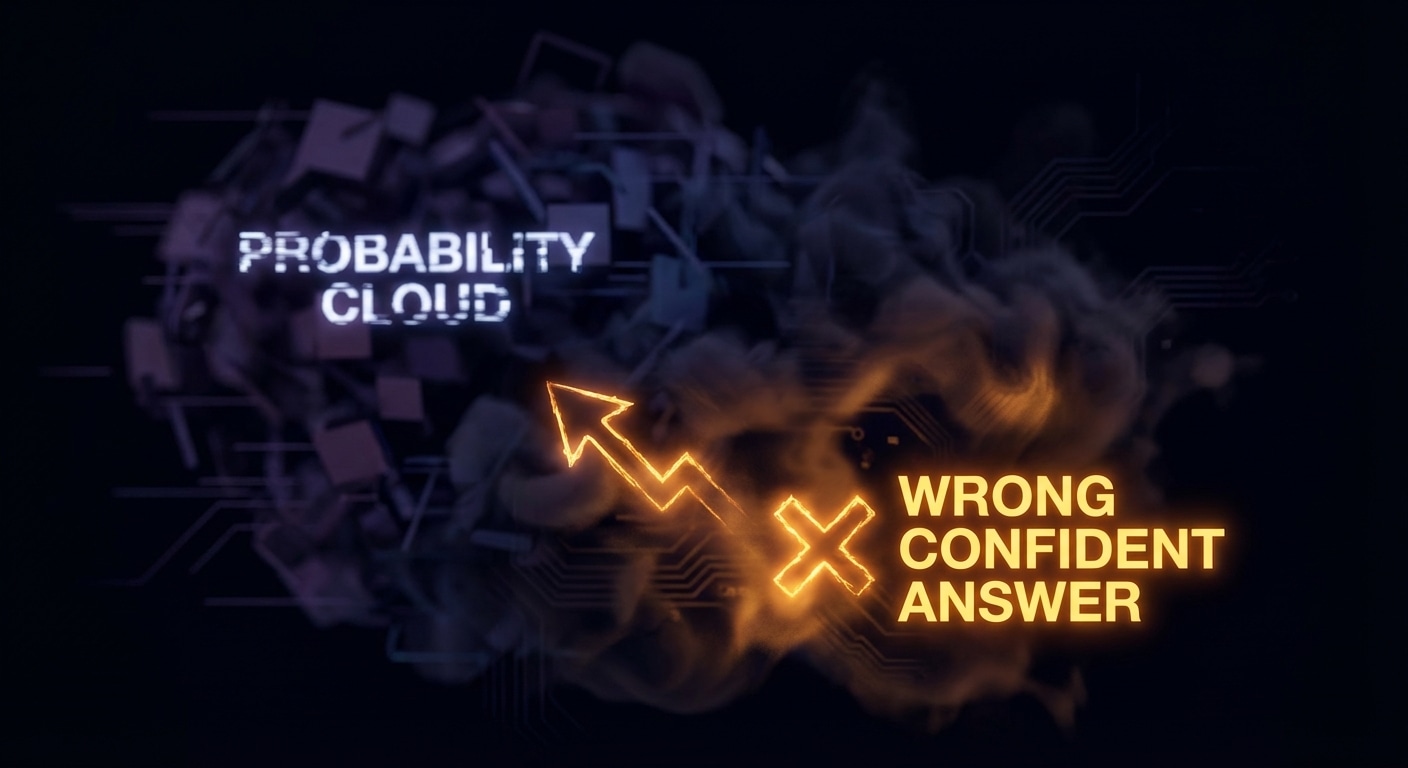

LLMs hallucinate because they’re not “looking up” facts — they’re predicting the next token. The training objective is to assign high probability to plausible continuations given context. Plausible doesn’t mean true: the model has learned patterns like “the capital of X is Y” and “according to study Z,” so it can generate confident, grammatical, and completely false statements. There’s no separate “truth check” in the forward pass; the only signal is statistical.
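To make the "plausible, not true" point concrete, here is a toy sketch. It assumes a bigram count model, which is far simpler than a real LLM, and a tiny made-up corpus; every name in it is illustrative. The model has never seen "australia", yet it still completes the sentence fluently, because all it has is next-token statistics.

```python
from collections import Counter, defaultdict

# Hypothetical training corpus: the model never sees "australia".
corpus = [
    "the capital of france is paris",
    "the capital of italy is rome",
    "the capital of spain is madrid",
    "the capital of france is paris",
]

def train_bigram(sentences):
    """Count how often each token follows the previous token."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def next_token_probs(counts, prev):
    """Turn the follower counts for `prev` into a probability distribution."""
    total = sum(counts[prev].values())
    return {tok: c / total for tok, c in counts[prev].items()}

counts = train_bigram(corpus)

# The prompt ends in "is", so the toy model only looks at what followed "is".
probs = next_token_probs(counts, "is")
best = max(probs, key=probs.get)

print(probs)
print("the capital of australia is", best)  # fluent, confident, and wrong
```

Nothing in this pipeline checks the answer against the world. A real model conditions on far more context than one token, but the objective has the same shape: pick a likely continuation.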

Why it happens: the training data contains many texts that look authoritative but are wrong (bad Wikipedia edits, forum posts, confabulations). Tuning on dialogue can also reward sounding confident. So when the model doesn’t know, it often still produces something that “fits” the context and the prompt. Low-probability tokens can still be sampled (especially at higher temperature), so rare or wrong answers can appear. And nothing enforces consistency with what it has already said: earlier turns are just more tokens in the context, so it can contradict itself in the same conversation.
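The temperature effect is easy to see in a small sketch. The logits below are invented for illustration (they are not from any real model), and the function is the standard softmax with a temperature divisor. Raising the temperature flattens the distribution, so the wrong candidates get sampled more often.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Softmax over logits / temperature, shifted by the max for stability."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for the token after "The capital of Australia is".
logits = {"Canberra": 4.0, "Sydney": 2.5, "Melbourne": 1.0}

by_temp = {}
for t in (0.5, 1.0, 1.5):
    probs = softmax_with_temperature(list(logits.values()), t)
    by_temp[t] = dict(zip(logits.keys(), probs))
    print(t, {tok: round(p, 3) for tok, p in by_temp[t].items()})
```

Even at low temperature the wrong tokens keep non-zero probability, and if the model's top-ranked token is itself wrong, lowering the temperature only makes the error more consistent.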

Mitigations are external: RAG (retrieve real docs and put them in context), tool use (call an API or DB instead of inventing), structured output (force a schema so the model has to fill slots), and post-hoc checks (fact-check, cite sources). You can also reduce temperature and use decoding constraints to make the model more conservative, but that doesn’t remove the underlying cause.
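As a rough sketch of the post-hoc check idea, the snippet below accepts a model answer only if some retrieved document supports it, and records which one. The document store, the IDs, and the `check_against_sources` helper are all hypothetical, and plain substring matching is a deliberately crude stand-in for a real retrieval and verification step.

```python
from dataclasses import dataclass
from typing import Dict, Optional

@dataclass
class CheckedAnswer:
    text: str
    source: Optional[str]  # id of the supporting document, None if unsupported

    @property
    def verified(self) -> bool:
        return self.source is not None

def check_against_sources(answer: str, documents: Dict[str, str]) -> CheckedAnswer:
    """Mark an answer as verified only if it appears in a trusted document."""
    needle = answer.strip().lower()
    for doc_id, body in documents.items():
        if needle and needle in body.lower():
            return CheckedAnswer(answer, doc_id)
    return CheckedAnswer(answer, None)

# Hypothetical retrieved documents (in a RAG setup these come from a search step).
docs = {"geo-001": "Canberra is the capital of Australia."}

ok = check_against_sources("Canberra", docs)
bad = check_against_sources("Sydney", docs)

print(ok)   # carries the supporting document id
print(bad)  # source is None: do not present this as fact
```

The design point is the return type: downstream code gets an explicit "verified or not" signal with a citation attached, instead of a bare string it might be tempted to trust.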

Understanding the probabilistic root cause helps you design systems that don’t over-trust the model. Never treat raw model output as ground truth for facts, names, or numbers. Always have a path to verify or ground.

Expect continued work on “truthfulness” and citation in models, but the fundamental issue — next-token prediction is not truth-tracking — will stay. Design around it.

nJoy 😉